Series <Architect to Developers>

Even if we know who you are, this does not mean you can do anything on our territory.

How do you address security in your product? – We use JWT and OAuth2. – Why so? – Because others use them.

Introduction: What is What

Microservices are small applications realising or set around business functions; these functions are usually of a small if not the smallest scope. That is, it is assumed that these functions are not decomposable to the smaller functions/actions. Protection of business functions is a topic with a long and grey beard. Protection requirements to business functions stay the same for decades though the requirement realisation and operational environment have changed dramatically.

If you take a look at the publications about protection from 2017 to 2019, you will find a total mess in this field related to identity and permission controls. The priority is given to the speed of development and scalability while actual protection becomes immaterial. As we know, a lot of developers and authors simply repeat what others said without much thinking. We are used to trusting somebody’s written opinion – this is not a pragmatic or reasonable matter, it is more of a cultural habit. Any professional analyst will say that mechanical repetition after others – a herd effect – is, at least, error-prone because the protection context is different in every situation and what is right in one case may be deeply wrong in another.

If a protection solution is quick to develop and scales to the sky but does not protect, it may be fine for mass-media SW, but we have many industries where such an approach is unacceptable. A logic of ‘having something that is not efficient is better than having nothing’ in the digital world means simply ‘having nothing’. If we know the problems in the protection we use, we can be sure that criminals are aware of them very well.

People claim that the topic of protection and the terminology used in it are confusing, unusual and too abstract for immediate implementation. I understand, protection is a special area, but if it is done right, it allows you to enjoy the results of your work; otherwise, your work is not done and you are at risk of continuous failures. I’ll try to use plain English instead of special terms in this article. Still, in the beginning, I have to explain a few protection terms for professional reference. Without a high-level understanding of this matter, you will not be able to recognise the problem when facing it and comprehend the danger of it.

When you deal with someone or something, you want to identify what or who this is. The identification procedure or verification is known as Authentication. If you are not interested in knowing who your counterpart is, e.g. you publish a blog on the Web for all interested, you do not need Authentication of your readers.

If you deal with someone or something that, as you assume, can act tangibly or intangibly on you or on your assets, e.g. a doctor, a postman, a burglar, an open fire, a toxic smell, a property agent, etc., you usually prefer to set certain rules and limits for protection or, on the contrary, you prefer to support these actions. The process of granting your permissions to someone or something to act on you or your assets is known as Authorisation. For example, you can authorise a postman to pick up your parcel at your doorstep and take it to the post office to send it to the addressee.

Finally, when people try to hide something from public eyes, which is the basis of privacy, they protect things from those who are not permitted to find out about the hidden thing. For example, we wrap gifts to surprise people, we place our letters in envelopes, and we encrypt content before sending it if the text is for the attention of permitted people only.

Thus, if we want certain information to be disclosed or certain activities to be conducted or restricted, including unwrapping or decryption, we use an access control procedure. To allow someone or something to act, you have to grant or permit access to something. However, first of all, you need to recognise this someone or something. Therefore, recognition or verification of identity is a pre-condition for granting permissions. Again, if you do not mean to set any restrictions, you do not need any permissions; if you do not need permissions, you do not need to verify the identity of the counterpart.

Problem

For years, business protection via technology was if not an orphan then a stepdaughter. Only in the last 15 years, when digitalisation started to penetrate many vital business areas, have the risks for business grown almost exponentially – the losses and the cost of protection failure started rocketing. The challenge with protection is that it sits outside of the functional domain, it crosses all domains, and it requires additional knowledge and work that does not bring new money to business, but potentially can free the business from its money. For example, it is very inconvenient for business if text containing personal data may not be printed and freely circulated in the company, if a person cannot understand an encrypted text, or if every time a person changes a role at work, this has to be reflected beforehand in the centralised access control system.

Reckless business people arguing against general protection said: “Are you saying we should not trust our own people?” This was a good question 30 years ago when a person changed jobs a few times over a lifetime, but now many business people change jobs every 2-3 years and internal information easily moves to a competitor. Moreover, many researchers have concluded practically the same: about 75%-80% of protection violations take place inside companies. With the modern high level of intellectual and psychological fraud, the only option companies have is an implementation of the concept known as Zero Trust, developed by Forrester Research, Inc. This concept is fully realised in the EU GDPR regulation of data protection, which is adopted in almost all European countries, even non-EU members, and in the USA.

When the economy digitalises, it is essential to protect our data and operations first and to develop new solutions and products speedily second. Otherwise, we risk setting up our companies and their consumers for failure.

Now, we are ready to take a look at protection for Microservices.

Technologies for Identity & Access Controls

Functional Microservices are supposed to execute uncorrupted business functions and Informational Microservices should work with untampered data. In order to realise complex business functions via Microservices, the latter should interact. Isolated self-sufficient autonomous Microservices can address only the lowest-level business needs. For anything more sophisticated, we have a choice: either require a full interaction between Microservices or avoid using them at all.

To interact, Microservices have to recognise each other. If we deal with mass media applications, Microservices do not need to know who uses/engages them – an end-user or another Microservice. They have nothing to protect. The Microservice identity is only needed to manage them – which one to replace, modify or remove. This is not a case of protection and I will not use it to benchmark Microservice development performance and scalability for other industries that have protected assets.

So, we work with applications comprising Microservices where each one has its identifier – µID. In interactions via API or Events, the Microservice-requestors should provide their µID and the responding Microservice-providers have to verify the µID before reacting to the requests. Verification of µID has nothing to do with access control whatsoever.

There are a few modern and popular techniques used to support the protection of Microservice-based applications: OpenID Connect, JWT and OAuth. This article deals with a different task – it is about interactions between Microservices. So, I am going to review each of these techniques with one pre-condition: their value will be challenged against Microservice-to-Microservice communication where each Microservice protects its functionality and/or resources. The Microservices can operate within the same application or across the application boundaries. The key word is ‘interaction’.

OpenID Connect, JWT and OAuth2

OpenID Connect

Wikipedia states that OpenID Connect (OIDC) is an identity verification layer on top of the OAuth 2.0 protocol. It is used to allow Web or mobile applications “to verify the identity of an end-user based on the authentication [identity verification] performed by an authorization [access permission control] server, as well as to obtain basic profile information about the end-user in an interoperable and REST-like manner”. I do not know about you, my dear reader, but when I hear about “the authentication performed by an authorization server”, I expect the same result as when cookies are baked by a shoemaker and boots are fixed by a baker.

It is security 101 that the identity control may not be performed or managed by an access permission control because this creates a perfect environment for fraud and crime. No Web or mobile tasks, no speedy delivery or development convenience can change the described rule. If you violate this rule, instead of protecting your assets you just form an additional security gap. The number of people violating the rule does not make the rule invalid (just because people dislike it) – it only makes a crowd of poor professionals and even more unprotected assets.

In the context of this article, it is a puzzle what an ‘end-user’ means in Wikipedia’s statement and what to do with the “REST-like manner” if Microservices interact via Events. Or may Microservices not use Events due to the limitations of those techniques? Is this absurd, or are these techniques unsuitable for Microservices?

“OpenID Connect allows a range of clients, including Web-based, mobile, and JavaScript clients, to request and receive information about authenticated sessions and end-users. The specification suite is extensible, supporting optional features such as encryption of identity data, the discovery of OpenID Providers, and session management”. Again, we have an unknown ‘end-user’ and, since the majority of Microservices are stateless, it is unclear what session is meant.

OIDC uses a trusted external source to prove to a Microservice-provider that the Microservice-requestor is what it says it is, without ever having to grant the Microservice-provider access to the credentials of the Microservice-requestor. Let’s see if this promise holds.

OIDC uses tokens including access tokens and ID tokens. An ID token must be a JSON Web Token (JWT). There are two primary actors in the OIDC workflow: the OpenID Provider (OP) and the Relying Party (RP). The OP is a server that is capable of verifying the presented identity of the end-user and providing the verification result as a JWT to the Relying Party. The Relying Party is an entity that “relies” on the OP to handle identity verifications. Assume that the RP is a Microservice. So, JWT is the core part of OIDC. Unfortunately, interactions between Microservices are irrelevant to the application’s end-user; they rather depend on the Microservice owners, which may be under different administrative jurisdictions. That is, the applicability of OIDC to our task appears highly questionable.

JWT

“JWT is an Internet standard for creating JSON-based access tokens that assert some number of privileges or claims.” The core of JWT is encapsulation and propagation of the identity of the end-user in Web-based interactions with multiple Web/mobile applications. In essence, JWT enables a single-sign-on mechanism for Web-based applications. This means that the end-user does not need to log in to each individual application since every one of them can receive the user identity via JWT, which can be definitively verified to prove that it hasn’t been tampered with. The JWT technique requires special attention in our case – we have to find out if JWT can be used in inter-Microservice communication.

JWT can be easily generated by anybody having just a few publicly available libraries/packages. All JWTs have the same structure: header, payload and digital signature. The latter is a keyed hash or an asymmetric signature computed over the encoded header and payload, i.e. over the data carried by the token, including identities of the end-user and requestor. The signing can be based on symmetric or asymmetric (public/private) keys. Though the signing algorithm is specified in the token, the Microservice-provider may not be ready to support it because in both cases additional information (a shared secret Key or a Public Key) has to be obtained beforehand.
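To make the three-part structure concrete, here is a minimal sketch using only the Python standard library. It assumes the HS256 (HMAC-SHA256) algorithm with a pre-shared key; the function names, the `uid-m1`/`uid-m2` identifiers and the demo key are hypothetical and serve only as an illustration.

```python
import base64
import hashlib
import hmac
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, the way JWT segments are encoded."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def make_jwt(payload: dict, shared_key: bytes) -> str:
    """Build a compact token of the form header.payload.signature (HS256)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = (
        b64url(json.dumps(header, separators=(",", ":")).encode())
        + "."
        + b64url(json.dumps(payload, separators=(",", ":")).encode())
    )
    # The signature is a keyed hash over the encoded header and payload.
    signature = hmac.new(shared_key, signing_input.encode(), hashlib.sha256).digest()
    return signing_input + "." + b64url(signature)


def verify_jwt(token: str, shared_key: bytes) -> bool:
    """Recompute the signature with the pre-shared key and compare."""
    if token.count(".") != 2:
        return False
    signing_input, signature = token.rsplit(".", 1)
    expected = hmac.new(shared_key, signing_input.encode(), hashlib.sha256).digest()
    return hmac.compare_digest(b64url(expected).encode(), signature.encode())


# Hypothetical identifiers: M2 is the requestor, M1 is the provider.
token = make_jwt({"sub": "uid-m2", "aud": "uid-m1"}, b"demo-shared-key")
```

Note that `verify_jwt` succeeds only for a receiver that already holds the same key, which is exactly the pre-shared information discussed above.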

So, the JWT is insecure and the trust in a JWT is based only on the trust in the authoritative digital signature, i.e. on the trust in the signing authority. However, since any arbitrary server can generate a signed JWT, we cannot be sure that the payload was validated by this server before it generated and signed the JWT. The receiver can validate the signature using the appropriate Key, but cannot validate the trustworthiness of any identity inserted in the token if the server that signed the JWT has no authority for the JWT receiver. What kind of security is this? (A fake?)

While Microservices created by one team usually have the same symmetric or Public Keys, this is not necessarily the case for Microservices from different teams, applications, departments and, definitely, companies. Validation of the digital signature in a JWT is a must-have, but it cannot be enforced. If a Microservice doesn’t validate received JWTs, it can still get the payload. This means that the content can be easily altered if the receiving side, for the sake of execution performance, does not validate the JWT and does not match the content with the signature, which is the same as losing protection.
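The following sketch illustrates the point: a receiver that only decodes the payload accepts altered content without noticing. All names and the dummy signature value are hypothetical; the code uses only the Python standard library and deliberately performs no signature validation.

```python
import base64
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Decode a base64url segment, restoring the padding that JWTs strip."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def read_payload_unverified(token: str) -> dict:
    """Return the claims WITHOUT checking the signature (unsafe on purpose)."""
    _header, payload, _signature = token.split(".")
    return json.loads(b64url_decode(payload))


def alter_claims(token: str, **changes) -> str:
    """Swap claims in the payload while keeping the old signature segment."""
    header, payload, signature = token.split(".")
    claims = json.loads(b64url_decode(payload))
    claims.update(changes)
    return ".".join([header, b64url(json.dumps(claims).encode()), signature])


# A hand-made token; the signature segment is a dummy because nobody checks it here.
original = ".".join([
    b64url(b'{"alg":"HS256","typ":"JWT"}'),
    b64url(json.dumps({"sub": "uid-m2", "action": "read"}).encode()),
    "signature-not-checked",
])
altered = alter_claims(original, action="delete")
```

A receiver calling `read_payload_unverified(altered)` sees the `delete` action as if the requestor had asked for it; only a signature check over the header and payload would expose the change.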

Anyone who knows the Key can create a new JWT or read/decrypt an existing one. In other words, instead of intercepting and tampering with a JWT, it is enough to steal the Key to get control over the JWT. Also, different algorithms have different security strength, meaning that a Microservice-requestor can use a weaker algorithm than the Microservice-provider requires and the latter would not be able to trust the request even with a JWT in it.

The OpenID Connect specification requires the use of JWT for ID tokens in a format that contains user profile information. This helps in Web single-sign-on, but is useless in inter-Microservice communications. Consequently, verification of the digital signature in the standard way is useless as well. If we replace the end-user with the Microservice-requestor, every such Microservice has to obtain its own JWT and repeat this procedure several times because a JWT includes an expiring validity time. The Microservice-provider can have its own security policy that demands a relatively short expiration time for a JWT, resulting in multiple request denials or in enforced JWT refreshes all the time. Also, since Microservices are mainly stateless, they cannot keep their JWT in cache/memory, while operations with a persistent store, where the JWT could be saved, become performance hits.
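A provider-side lifetime policy of this kind might look like the sketch below. It relies on the registered `iat` (issued at) and `exp` (expiration time) claims of JWT; the function name and the `max_lifetime_s` parameter are assumptions made for illustration.

```python
import time
from typing import Optional


def token_acceptable(claims: dict, max_lifetime_s: int, now: Optional[float] = None) -> bool:
    """Apply a provider-side policy to the `iat` and `exp` claims of a token."""
    now = time.time() if now is None else now
    issued_at, expires_at = claims.get("iat"), claims.get("exp")
    if issued_at is None or expires_at is None:
        return False  # no validity window stated: deny
    if now >= expires_at:
        return False  # expired: the requestor has to obtain a new token
    # Deny tokens whose total lifetime exceeds what this provider tolerates.
    return (expires_at - issued_at) <= max_lifetime_s


# A token valid for 300 seconds, checked against two different provider policies.
claims = {"iat": 1000, "exp": 1300}
```

With these values, `token_acceptable(claims, 600, now=1100)` passes, while a stricter provider using `max_lifetime_s=60` denies the same token, which forces the requestor to fetch a shorter-lived one.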

The only protection of content a JWT offers is data integrity. That is, anyone who obtains your JWT can read your identity and your custom information. Since generating a new JWT is not a problem, your identity can be used for producing fake JWTs. Moreover, if you generate a JWT with a so-called Public Claim, the latter should be unique to ensure that no two public claims collide. If a Private Claim is specified, the Microservice-requestor and Microservice-provider must agree on the use of it up-front. This is unrealistic in the common case, especially considering that the same Microservice can change its interaction landscape and/or participate in several distributed transactions over time.

I think so many questions and gaps in the protocol and its unenforced use (sacrificed for the sake of performance) raise serious doubts about the usability of JWT and, consequently, about the reliability of OpenID Connect. If so, the access permission control provided with OAuth2 also becomes, at least, doubtful, if not unreliable.

OAuth2

OAuth2 is an open standard for access control delegation; it is mistakenly associated with access control itself (authorisation). That is, the control is done by something else. I think that it is valuable to find out what this ‘something else’ is.

OAuth2 is effective when an end-user tries to delegate the permission to access his/her resource (set behind an API) to another user or application without disclosing personal credentials for it. There are four permission Grant Types in OAuth2 that include both delegation and direct credential submission (it is unclear why the latter is included since it is not about delegation). All of them are relatively complex except the strange Client Credentials Grant Type, where the client grants itself access to its own application.

Assume there are two Microservices, M1 and M2 – they are independent and have different owners; there is no trust between them. Therefore, as a precondition for interaction, the trust has to be established via verification of identity and control of access permissions. The structures below illustrate what the workflow of OAuth2 would look like if applied to interactions between these Microservices:

- A Microservice M2 wants to trigger functionality provided by Microservice M1

[Option 1 – based on Identity Provider]

- M2 contacts M1

- M1 returns the URL of its Identity Provider to validate the expected M2 identity

- M2 contacts the Identity Provider of M1 (it is not guaranteed that this can be done) and requests the verification of its identity

- M2 can/may receive an authorisation code from the Identity Provider confirming the identity of M2, or a denial (if M2 is unknown to this Identity Provider)

- M2 sends the authorisation code to M1

- M1 programmatically sends this authorisation code to its ‘owner’ (or end-user, as the original specification states) that plays the role of a Permission Authority (PAuth), if it can

- PAuth can authorise the M2-request with an access/authorisation token, or deny

- PAuth returns the access/authorisation token to M2 in case of successful permission

// end of Option 1

[Option 2 – based on Access Control/Authorisation Provider]

- M2 programmatically contacts an Authorisation Server (OAuth 2.0 server)

- The OAuth 2.0 server programmatically redirects the M2 call to the M2 ‘owner’, which plays the role of an Identity Authority (IAuth), to verify the M2 identity (it is not guaranteed that this can be done)

- The M2 ‘owner’ verifies the M2 identity, if it can

- The M2 ‘owner’ passes the authorisation code to the OAuth 2.0 server

- The OAuth 2.0 server issues an access token using its own Permission Provider (how this Permission Provider gets to know M2 and the permissions of M1 is left in the dark)

- The OAuth 2.0 server sends the access token to M2

// end of Option 2

- M2 contacts M1 again with the access token. If M1 is satisfied, it performs the requested activity – starts its function.

Here are several non-trivial questions to these workflows:

- How would the Identity Provider that M1 deals with know about M2 and its identity?

- What is the mysterious “owner” of M1?

- How does this work if real-time programmatic access to the “owner” of M1 is impossible?

- What is the mysterious “owner” of M2?

- How does it work if the “owner” of M2 cannot pass the authorisation code to the OAuth 2.0 server online and in real time?

- How does the OAuth 2.0 server know about M1 and its access permissions?

There are probably more questions but this is enough to conclude that, without resolving the aforementioned problems, it is extremely risky to apply OAuth2 to inter-Microservice interactions. Nevertheless, to be objective, I want to mention that OAuth2 articulates one non-delegating Grant Type named the Resource Owner’s Password Credentials. Apparently, this is a classic combination of verification of the requestor’s credentials and separate control of access permissions with two corresponding Providers and no “owners”. This is the case where OAuth2 has simply taken over a model known for decades before OAuth was created.

Implementation Approaches to Identity & Access Controls

The results of the conducted reviews are neither optimistic nor hopeful. My approach to OpenID Connect, JWT and OAuth from a perspective they were not created for may be inaccurate. That is, they may be OK for other tasks, but this does not help me. This has stimulated me to come up with alternative solutions for Microservices.

Basic Protection Method

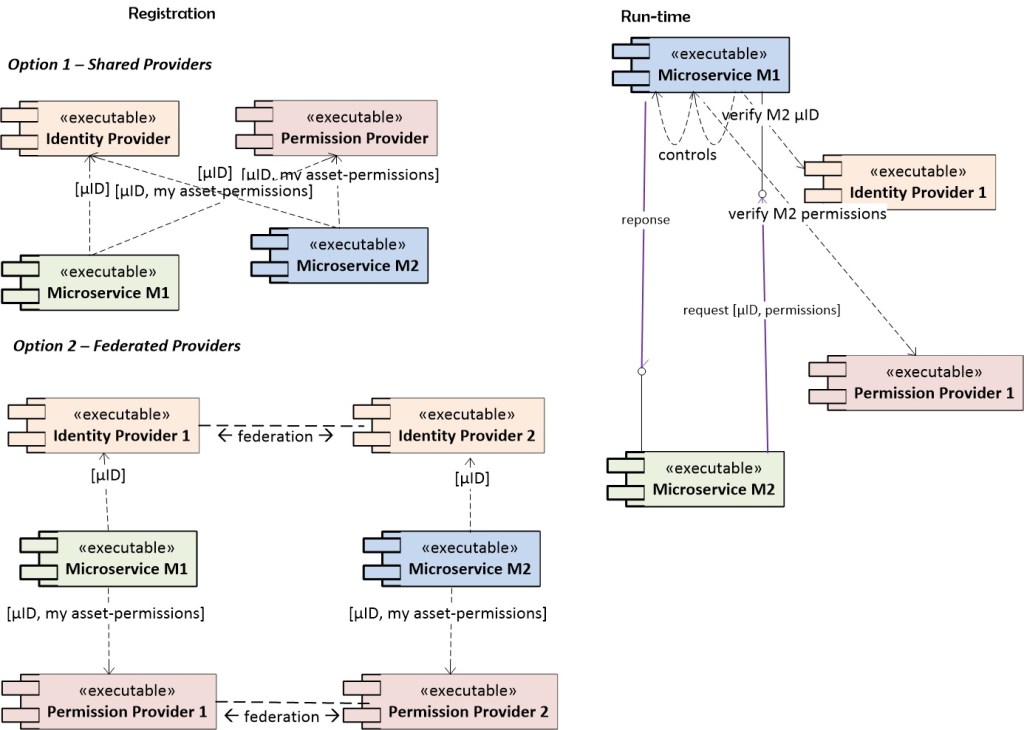

Principles of Separation of Concerns and Microservice Single Responsibility require the protection mechanism to be externalised from the Microservices. The Basic Protection Method is a classic delegation of credential verification and validation of access permission to the dedicated entities, which can be Microservices themselves. The assumption here is that Microservices interact via API and the credentials and requested permissions are encrypted regardless of the use of secured transport protocol; the Event-based interactions will be addressed separately.

For Microservices M1 and M2, the steps of the Basic Protection Method are:

- M2 wants to access/trigger resource/functionality of M1 and makes an API call. The M2’s credentials (in any form including references to external N-factor controls) are embedded in the call. Also, M2 specifies what it wants M1 to do (with its function or resource), i.e. which action to perform

- M1 redirects – delegates – the M2’s credentials to its Identity Provider

- The Identity Provider performs the credential check-up. To succeed, M2 has to be known to this Identity Provider. The common solutions for this condition are a) Identity Provider is shared between M1 and M2; b) each Microservice in the interaction has its own Identity Provider and they are linked via federation

- The Identity Provider returns the results of the credential verification (Yes/No) to M1

- If verification is positive, M1 redirects – delegates – the M2’s access request to its Permission Provider. All permissions regarding the M1 functionality and/or resources are preliminarily registered in the Permission Provider. In some cases, Permission Provider may be shared among M1 and M2

- The Permission Provider verifies the match of the requested access with permitted access for M1.

- The Permission Provider returns results of the match (Yes/No) to M1

- If the match is positive, M1 performs the requested actions

- M1 returns results (if requested) to M2.
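The steps of the Basic Protection Method can be sketched as follows. This is an in-process illustration only: the class names, the `uid-m2` identifier and the plain-text credential are hypothetical, and in a real deployment both Providers would be remote, with credentials and requested actions encrypted as the method assumes.

```python
import hmac
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class IdentityProvider:
    """Stand-in Identity Provider: knows one credential per µID."""
    known: Dict[str, str] = field(default_factory=dict)

    def verify(self, uid: str, credential: str) -> bool:
        expected = self.known.get(uid)
        # Constant-time comparison; unknown requestors are rejected.
        return expected is not None and hmac.compare_digest(
            expected.encode(), credential.encode()
        )


@dataclass
class PermissionProvider:
    """Stand-in Permission Provider: pre-registered actions per requestor µID."""
    permitted: Dict[str, Set[str]] = field(default_factory=dict)

    def is_permitted(self, uid: str, action: str) -> bool:
        return action in self.permitted.get(uid, set())


@dataclass
class ProviderService:
    """Plays the role of M1: delegates both checks, then acts."""
    identity: IdentityProvider
    permissions: PermissionProvider

    def handle(self, uid: str, credential: str, action: str) -> str:
        if not self.identity.verify(uid, credential):       # identity check first
            return "denied: identity"
        if not self.permissions.is_permitted(uid, action):  # then permission check
            return "denied: permission"
        return f"done: {action}"


m1 = ProviderService(
    IdentityProvider({"uid-m2": "s3cret"}),
    PermissionProvider({"uid-m2": {"read"}}),
)
```

Calling `m1.handle("uid-m2", "s3cret", "read")` passes both checks, while a wrong credential stops at the Identity Provider and an unregistered action stops at the Permission Provider.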

The process includes 4 additional interactions between the Microservice-provider and the Identity and Permission controls. This is an obvious overhead for Microservice performance and a source of additional failures, though it is the most accurate protection mechanism; it is shown in the diagram above.

Variations of the Basic Protection Method can be cases where M2 obtains the identity-confirmation token from the Identity Provider and the access-permission token from the Permission Provider up-front. Then, M2 can submit them to M1, which will need to send them both for verification. The condition of these cases is: if M2 receives a negative response from any of the Providers, M2 should not invoke M1, thus saving M1 time/calculations. The illusory pros here are that M2 gets tokens up-front and might hope to reuse them. The real cons here are that M2 gets access to the responses from the Providers and can alter them. The tokens can be stolen or even voluntarily passed to someone else who will pretend to be M2 while conducting fraud. From the Zero Trust perspective, tokens obtained up-front cannot be trusted.

Basic Protection Event-based Sub-Method

In this method, the Microservice-requestor M2 interacts with the Microservice-provider M1 via pushing or polling event notifications. The condition here is that the M2’s credentials and requested action should be embedded into the notification. The credentials and required action are encrypted.

The difference from the process of the Basic Protection Method is this: before M1 redirects – delegates – the M2’s credentials to its Identity Provider, M1 has to extract, decrypt and segregate M2’s credentials and requested permissions. Then, the credentials and requested permissions are sent to the Identity and Permission Providers respectively for verification. In other words, once M1 gets the credentials and requested permissions from the event notification, the entire basic process is executed.

Closed Optimised Protection Method

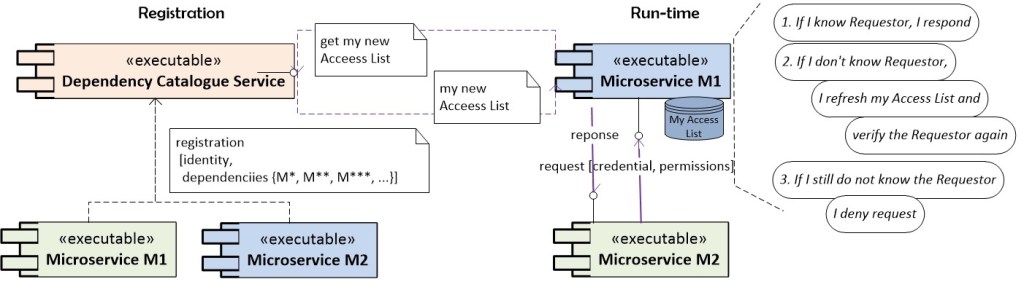

This method works for a special case where Microservices are strictly catalogued and where the deployment of new Microservices is controlled. In contrast to Microservice Architecture, which requires maximum isolation and autonomy of Microservices but does not explain how to compose applications out of Microservices, this method addresses cases where Microservices actively interact.

So, the number of Microservices in an application (as well as in the company) is finite. At design time, we can know all Microservices that need to interact with, i.e. consume, a given Microservice. The Microservice-consumers form a closed collection that can be extended or shrunk only via a special procedure. If I change my point of view and look at it from the Microservice-provider’s perspective, I can also find a closed collection of Microservice-consumers. Such collections are usually not big. The interactions can be via API or Event-based, which will be explained separately.

For each application or each corporate division/group/department/LOB, we can create a shared Dependency Catalogue Service that would list every Microservice-consumer and all Microservice-providers that consumers may invoke. To be included, all Microservices should be meticulously reviewed, including their ownership and unique µID. For each Microservice-provider, an enumeration of Microservice-consumers forms a Dependency Access List, which can vary from one Microservice to another.

The special procedure mentioned above concerns the conditions on Microservice deployment. The conditions are:
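A minimal in-memory sketch of such a catalogue is given below. The class name, method names and µID values are hypothetical; a real Dependency Catalogue Service would also cover the ownership review and persistence, which are omitted here.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set


class DependencyCatalogue:
    """Registry of which consumers may invoke which providers, and how."""

    def __init__(self) -> None:
        # provider µID -> consumer µID -> set of permitted actions
        self._entries: Dict[str, Dict[str, Set[str]]] = defaultdict(
            lambda: defaultdict(set)
        )

    def register(self, consumer_uid: str, provider_uid: str, actions: Iterable[str]) -> None:
        """Record that a reviewed consumer may request these actions of a provider."""
        self._entries[provider_uid][consumer_uid].update(actions)

    def access_list_for(self, provider_uid: str) -> Dict[str, Set[str]]:
        """Return a copy of the provider's Dependency Access List."""
        consumers = self._entries.get(provider_uid, {})
        return {uid: set(actions) for uid, actions in consumers.items()}


catalogue = DependencyCatalogue()
catalogue.register("uid-m2", "uid-m1", {"read"})
catalogue.register("uid-m3", "uid-m1", {"read", "write"})
```

Here `catalogue.access_list_for("uid-m1")` yields the Dependency Access List of M1, and a provider with no registered consumers gets an empty list.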

- A Dependency Catalogue Service should be up-and-running before any Microservice is deployed. Usually, the Dependency Catalogue Service is needed per application and per each business unit that might have more than one Microservice-based application

- As a step of deployment, each Microservice has to be assigned a unique core identity (µID). Each instance (and version) of this Microservice should have its individual identity, which might be derived from the core identity

- Before the Microservice is physically deployed, it has to be registered with the Dependency Catalogue Service. This registration should be meticulous including validation of Microservice owner and its rights to deploy the Microservice in the logical/physical boundaries of a particular application. If a Microservice offers more than one access action, which is possible with gRPC interface as an API, this action has to be explicitly mentioned in association with expected consumers.
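One possible way to derive an individual instance identity from the core identity is a name-based UUID, as sketched below. This is an assumption for illustration, not a prescribed scheme: the function names, the owner and service names, and the choice of UUID version 5 are all hypothetical.

```python
import uuid


def core_identity(owner: str, service_name: str) -> uuid.UUID:
    """Derive a stable core µID from the (already validated) owner and service name."""
    return uuid.uuid5(uuid.NAMESPACE_DNS, f"{service_name}.{owner}")


def instance_identity(core: uuid.UUID, version: str, instance_no: int) -> uuid.UUID:
    """Derive an instance-level identity, using the core µID as the namespace."""
    return uuid.uuid5(core, f"{version}/{instance_no}")


core = core_identity("payments.example.com", "invoice-reader")
first = instance_identity(core, "1.4.0", 1)
second = instance_identity(core, "1.4.0", 2)
```

Because the derivation is deterministic, the catalogue can recompute an instance identity from the core µID, the version and the instance number instead of storing every instance.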

At run-time, the consumer’s requests contain encrypted credentials and required permissions to actions. When a newly deployed Microservice, regardless of whether it is a Functional or Informational Microservice, receives the first request, it should:

- Contact the Dependency Catalogue Service and obtain its individual Dependency Access List prepared by the Dependency Catalogue Service. The Dependency Access List enumerates all other Microservices (their µID) that have registered their needs to work with this Microservice as well as all permitted actions, per Microservice

- Upon receiving the Dependency Access List, persist it in its personal data store; nobody else may access this data store

- Match the requestor’s µID and, possibly, the action requested to the Dependency Access List.

- Execute requested action if the match is positive or deny service otherwise.

The integrity of the list should be protected (by encryption, or a digest, or a signature, or whatever solution can be used here). This is an additional security precaution in case an intruder tries to alter this list.

For the following invocations, the Microservice has to obtain the Dependency Access List from its local data store and perform the last two steps (matching and execution) from the above sequence. Depending on the security appetite in the company, the Dependency Access List may also be kept in an online cache to save on interactions with the data store. This model is shown in the diagram above.
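As one example of the digest option, the list can be stored together with a keyed hash and checked on every load. The sketch below uses HMAC-SHA256 from the Python standard library; the function names, the record layout and the key handling are assumptions for illustration.

```python
import hashlib
import hmac
import json


def seal(access_list: dict, key: bytes) -> dict:
    """Serialise the Dependency Access List and attach an HMAC-SHA256 tag."""
    body = json.dumps(access_list, sort_keys=True)
    tag = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def open_sealed(record: dict, key: bytes) -> dict:
    """Return the list only if the stored tag still matches its body."""
    expected = hmac.new(key, record["body"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected.encode(), record["tag"].encode()):
        raise ValueError("Dependency Access List was altered")
    return json.loads(record["body"])


# Hypothetical list: consumer µID -> permitted actions.
record = seal({"uid-m2": ["read"]}, b"local-store-key")
```

An intruder who edits the stored body without knowing the key cannot produce a matching tag, so `open_sealed` raises instead of returning the altered list.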

Microservices, including new versions, are deployed in no particular order. Also, new compositions of Microservices may require new interactions. Therefore, the locally kept Dependency Access List can become outdated at any moment. This is why, before responding to a request with a denial, the Microservice-provider has to refresh its Dependency Access List by repeating all four steps described above. If no match is found again, the request is recognised as illegal and the Microservice or entity that sent such a request is viewed as an intruder with all due reports, logs and alarms.

In summary, the Closed Optimised Protection Method provides a Service, central (at least) for the application, that validates in advance the identities and access rights of each Microservice in the application regarding other Microservices. At run-time, each Microservice that receives a request validates the legitimacy of this request against the locally kept Dependency Access List. The latter can be updated at any moment, when needed, i.e. in a “lazy-refresh” mode.
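The refresh-before-denial behaviour can be sketched as below. The `ProviderGuard` class and the `fetch_access_list` callable are hypothetical; the callable stands in for the call to the Dependency Catalogue Service, and an in-memory attribute stands in for the private local data store.

```python
from typing import Callable, Dict, Optional, Set

AccessList = Dict[str, Set[str]]  # consumer µID -> permitted actions


class ProviderGuard:
    """Checks requests against a locally kept list, refreshing it before a denial."""

    def __init__(self, fetch_access_list: Callable[[], AccessList]) -> None:
        self._fetch = fetch_access_list
        self._local: Optional[AccessList] = None
        self.fetch_count = 0  # kept only to make the refreshes observable

    def _refresh(self) -> AccessList:
        self._local = self._fetch()
        self.fetch_count += 1
        return self._local

    def is_allowed(self, consumer_uid: str, action: str) -> bool:
        just_loaded = self._local is None
        local = self._refresh() if self._local is None else self._local
        if action in local.get(consumer_uid, set()):
            return True
        if just_loaded:
            return False  # the list is already fresh: deny
        # No match in a possibly outdated list: refresh once before denying.
        return action in self._refresh().get(consumer_uid, set())


catalogue_state: AccessList = {"uid-m2": {"read"}}
guard = ProviderGuard(
    lambda: {uid: set(actions) for uid, actions in catalogue_state.items()}
)
```

A consumer registered after the first load is still accepted, at the cost of one extra fetch, while an unknown requestor is denied only after the refreshed list confirms it is not registered.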

The overall solution can scale vertically and horizontally on the assumption that the company controls its Microservice development. The performance of this method is better than with the Basic Protection Method. It can be even better than with JWT and OAuth if they are used securely, i.e. after all questions to them are answered.

However, if a Microservice’s API is exposed outside of the corporate boundaries, i.e. we do not have any chances to know who contacts us and on what permission grounds, the Basic Protection Method should be applied with all ‘dances’ around sharing Identity and Permission Providers.

Closed Optimised Event-based Sub-Method

In event-based interactions, a request from Microservice M2 to the Microservice-provider M1 takes the form of an event notification. This works in both push and pull models; Event Sourcing, depending on implementation, can be categorised as push or pull as well. The notification’s content includes the M2’s credentials and required permissions for actions to be conducted by M1. The credentials and action are encrypted.

Upon obtaining the event notification and before doing anything, M1 extracts, decrypts and segregates M2’s credentials and requested permissions. Then, the same core process described above is executed under the same pre-conditions.

Conclusion

The task addressed in the article is about protecting a Microservice’s functionality and resources in inter-Microservice interactions. Since Microservices are independent functional products, their security should be no weaker than that of applications or systems. Known techniques like OpenID Connect, JWT and OAuth2 were reviewed from the perspective of Microservice interactions. It has been found that these techniques cannot be applied as-is to Microservices, either because of unanswered questions to them or because of the doubtful trustworthiness of related workflows.

The article proposes two protective methods for Microservices (or services) that have sensitive functionality and/or resources. While the Basic Protection Method is just a classic identity verification followed by access permission control, the method does not require single centralised Identity and Permission Authorities. The latter can be distributed as needed following the distribution of Microservice-based applications. The Closed Optimised Protection Method replaces Identity and Permission Authorities with an application-scoped registry called the Dependency Catalogue Service, which itself can be a Microservice and deployed together with the applications. In this method, each Microservice-provider is supplied with a Dependency Access List of other Microservices that need to interact with it. The Dependency Access List is created via a special dynamic procedure and can be extended beyond the application boundaries; this procedure can be automated at runtime and/or configured offline.

This method uses lazy-initiation & refreshment and competes in performance with handshake-based OpenID-JWT/OAuth2 techniques.