The image is created by Michael Poulin with the help of DeepAI.

The text is written by a human. — AI Humanise Detector.

Part 1.

General-purpose AI Agents & their Risks

Where the EU AI Act has Stumbled

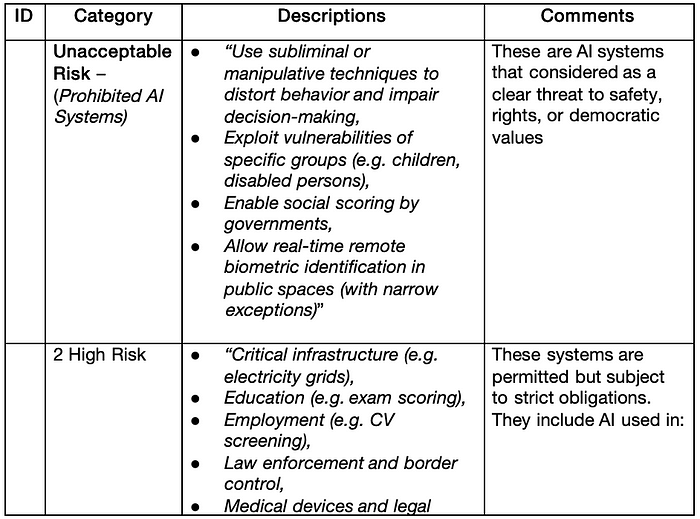

Many AI experts agree that the EU AI Act, finally widely accepted in 2025 (with only a couple of exceptions), is the best possible foundation for a human-centric approach to the creation, governance and usage of generative AI (hereinafter AI). The Act provides a Risk Categorisation for qualifying AI-based products against the risks of harm an AI is capable of causing to a person. Let me stress this: to a person, not to a company, group of people, government or any other entity that can act as a ruler toward a person.

The Risk Categorisation scheme is quite comprehensive and includes 4 risk categories. AI-based products must be submitted for categorisation and, depending on the assigned category, should adhere to certain rules and constraints. It is assumed that AI-based products that have not passed the categorisation procedure would be restricted from usage in the EU Member States. Though the core of the Act was ready in 2023, the corresponding legal regulations are still under development.

Two years is a long time for a technology like AI, and AI capabilities reached a new level in 2025 in the form of Agentic AI or AI Agents. “As Oliver Patel, Enterprise AI Governance Lead at AstraZeneca, notes, “there is no mention of the words ‘agent’ or ‘agentic’ in the EU AI Act, ISO 42001 or the NIST AI Risk Management Framework”. Although agentic AI may technically be covered by the EU AI Act’s existing definition of “AI System” (which appears to have been designed to be broad enough to allow for future developments), the lack of specific reference to such terms highlights that the Act may leave some gaps in this area.”

Apparently, the problem outlined by Mr Patel is quite serious. It not only creates challenges for Agentic AI creators in the EU but also invites speculation aimed at separating the human-centric Act and its risks from Agentic AI. In other words, some people take advantage of the situation to unleash the construction of anti-personal, anti-privacy, anti-freedom AI Agents that may ignore the harm they can cause to people.

In this article, I will discuss the ethical and other types of risks that can exist in an arbitrary system constructed as a combination or composition of general-purpose Agentic AI (GPAI Agent) entities. My goal is to explain how such a system can be viewed or approached from the perspective of Risk Categorisation in line with the EU AI Act.

Why Agentic AI is Systemically Risky

The major advantage of an Agentic AI is that it is proactive. It can operate in high-risk spheres like healthcare, transport, education, the military, and the production of materials that are highly poisonous for people. However, all mentioned aspects relate to how people might use Agentic AI, not how it works itself.

The EU AI Act sets special requirements for AI-based products that include, among others, Human Oversight (Article 14), Record Keeping (Article 12), Transparency (Article 13), and Technical Robustness and Safety (Article 15). The requirements of transparency and human oversight are part of the UN’s ethical principles for AI as well.

For the context of this article, I enumerate a few capabilities that Agentic AI possesses:

* It works autonomously without human oversight,

* It is driven by a goal rather than by context, as a regular LLM is, and makes intermediary decisions on its own,

* It can decide on accessing restricted resources to reach the goal,

* Its intermediary decisions are invisible to people, i.e. non-transparent,

* As a technical entity, it has no accountability whatsoever.

As a matter of fact, Agentic AI violates all mentioned crucial requirements for humanity and constitutes increasing risks systemically.

Dances around “Leave Agentic AI Alone”

Irresponsible and reckless enthusiasts of the techy view on Agentic AI try to find nonexistent gaps in words to protect Agentic AI realm from human-orientated regulations. Technology still cannot grasp the fact that AI and Agentic AI are not in the scope of pure technical practice because both cross the red line into humanity and people’s society. So, technology has acquired legal power.

Article 3: Definitions of the EU AI Act defines risk as “the combination of the probability of an occurrence of harm and the severity of that harm,” which is a well-known and accepted definition. The Act also defines “12 risks in total divided into four categories:

1. Geopolitical Pressures,

2. Malicious Usage,

3. Environmental, Social, and Ethical Risks, and

4. Privacy and Trust Violations”

with a few exceptions.

The lawyers have concluded that “The classification of agentic AI into a specific risk category under the EU AI Act is contingent upon its contextual application and potential impact on fundamental rights or safety.” Well, very nice words, but do they mean anything valuable? Does “contingent upon its contextual application” mean that depending on the context where an Agentic AI operates, the EU’s risk categorisation may be applied or not? The trap here is that no one knows in which context the Agentic AI really works because it autonomously makes decisions on extending or collapsing the nomenclature of information sources it needs to use to reach the goal.

In other words, this legal statement is “practical” for idiots or for those who want to hide the truth. If applicability of the Risk Categorisation scheme is contingent, it is always possible to refer to non-applicability when the harm caused by the Agentic AI is severe. What the lawyers tried to ignore is that the EU AI Act requires passing categorisation control for all AI. That is, the lawyers should legally prove that Agentic AI contains no AI per se to avoid the categorisation process.

Unfortunately, the lawyers are right on only one point — the EU AI Act must be refined and extended to cover Agentic AI. This will eliminate all tricks as described.

Not Everyone Wants the Best for People

It is not rocket science to know that some people believe that legal regulations are created to prevent some wrongdoing and drive our industrial practice to the “good for people” while others hate regulations because they bring more troubles to those people and disturb them in what they are doing. I can suggest that the latter “makers” probably do something not that good to people; otherwise they would not hate regulations.

This logic leads to two approaches in AI development. When we build something new:

1) We respect existing regulations and gain our income fairly.

2) We search for a workaround for existing regulations for the sake of higher immediate income.

“Alex Kirkhope and Paul Nightingale explore some implications agentic AI may raise for current regulations and governance frameworks, focusing particularly on how to reconcile the need for human oversight with the inherent autonomy of the systems”. Since “inherent autonomy of the systems” means an absence of “human oversight,” this reminds us of the plans of Soviet communists to turn river flows backward.

Considering that AI Agents “can be given ‘a high-level…goal and independently take steps to bring it about, through research or work of their own” leaves only two options: a) either humans should be excluded “by the rule”, which contradicts the purpose of the article, or b) AI Agents should drop this capability, which raises the question of whether they would remain agentic after that.

The attempts pioneered by OpenAI and Google to create LLMs “that can take actions on a user’s behalf, and Salesforce has launched its workplace AI agent, which claims to “use advanced reasoning abilities to make decisions and take action” without relying on human engagement” have been reviewed in the book “Married to Deepfake” precisely because this human instruction-based “advanced reasoning” is a deepfake targeting naive “useful idiots” (a phrase attributed to V. Lenin).

A totally different approach to protecting AI/Agentic AI was chosen by the UK Government, although it has honestly identified multiple risks and open questions about AI frameworks and their realisation. They call it a “soft law.”

The term “soft law” incorporates a lot of ambiguity and depends on the one who controls this “law.” For example, someone can call on UN Guidelines on AI and Human Rights, or ISO or IEEE standards, or even corporate codes of ethics. A soft law refers to something that is non-binding, voluntary, or aspirational, and its applicability is up to the actor. While many take the market pressure of expected new regulation as a soft law and prepare for it up-front, this concept also widely opens the “doors” for all types and forms of speculation, for exploiting gaps in the law, and for creating negative precedents that could prevent proper laws from being set or make it more difficult.

Officially, the UK has taken a pro-innovation, light-touch approach to AI governance. While it acknowledges the importance of human oversight, it delegates the control over the risks to sector-specific regulators, which can result in serious discrepancies between risk controls in different sectors, and emphasises voluntary frameworks and toolkits like the “People Factor” and “Hidden Risks Register”.

Actually, “Agentic AI is clearly on the UK government’s radar. The last government’s 2024 consultation on its “pro-innovation approach to AI regulation” white paper identified “autonomy risks” as one of the key risk areas, and noted “New research on the advancing capabilities of agentic AI demonstrates that we may need to consider potential new measures to address emerging risks as the foundational AI technologies that underpin a range of applications continue to develop”. Nevertheless, “The UK government has consistently (even with the change of administration in 2024) demurred from publishing any overarching legislative framework, ostensibly because it does not wish to “rush to regulate” and “potentially implement the wrong measures that may insufficiently balance addressing risks and supporting innovation”. The government’s promises of “appropriate legislation” in 2025, even those articulated in the King’s Speech, remain “good wishes.”

Instead, the Prime Minister’s Adviser on AI promotes “safe AI innovation” where whether an innovation is safe or not is decided by unauthorised arbitrary people with no trustworthy risk assessment methodologies, making the whole concept “borderline reckless.” It is not a surprise that Jonathan Zittrain, an American academic, author, and lawyer associated with Harvard University in the US, which is notorious for its left-wing education programs, supports the UK’s wording by saying, “The blinding pace of modern tech [can] make us think that we must choose between free markets and heavy-handed regulation — innovation versus stagnation.” Zittrain misrepresents the nature of AI regulation by framing it as opposed to a free market. In reality, the free market works on tons of different regulations, and many of them have saved the world economy from stagnation several times. I think that such active resistance to AI regulations has a concealed goal, and it is not about the potential cost of retrofitting.

If a regulation can, independently from the provider, uncover an accidentally or deliberately unaddressed risk, it is not only a reputational hit: it can also block further “innovations” of these risky products and stop their penetration into the markets where such regulation is absent, i.e., it is about the loss of future revenue. Unfortunately, this is not the major reason for keeping AI and AI Agents unregulated behind the non-binding “published 50 recommendations around AI.” The major reason lies in creating the reality that the leftist (centre-left, though many believe it is just left) Labour Government wants to establish in the industry while leaving room for correction rather than restricting obviously dangerous “innovations.” When the protective humanitarian regulations inevitably come into force, it will be too late to change many implementations of unregulated AI and AI Agent based products (due to the potential latency, cost, and missed market advantage).

Some people can find insinuations of conspiracy in the presented arguments. Maybe, but it is obvious that the UK’s Agentic AIs won’t be allowed to work in the EU member states in spite of any liberated innovations.

Agentic AI Compliance Problems

To become compliant with the EU AI Act and its Risk Categorisation scheme, an Agentic AI technology should satisfy requirements for identification and categorisation of risks associated with agentic AIs, where the notion of risk is defined in the Act specifically with respect to human health, safety, and personal rights. This significantly differs from how the UN treats Agentic AI risk. The UN does not have a single unified definition of AI risk but deals with distinct risks identified by the UN bodies (like UNESCO, ITU, OHCHR, and others) that approach AI from various thematic perspectives (human rights, development, ethics, etc.). Overall, the UN distinguishes the risks to individuals, to society, and to the environment.

When talking about GPAI Agent compliance with the EU AI Act, it is necessary to take into account the compliance requirements for the GPAI Agent based products and use them for defining the risk category. Due to the complexity of the GPAI Agent based products or systems, it makes sense to view the EU Risk Categorisation scheme in two steps:

1. Categorisation of the AI Agent based composition where AI Agents are engaged to work together on a common shared task.

2. Categorisation of an individual AI Agent.

The following sections address each step sequentially.

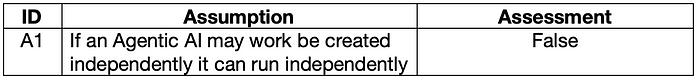

Unrealistic Assumption & Soft Dependency

I start with one common ambiguous belief that can lead to misinformation and enormous extra efforts spent for nothing.

Table 1.

Though an Agentic AI works autonomously, it still uses generative AI elements. People have publicly and agreeably concluded that even creators of AI do not know a priori the logic that an AI would create and utilise at its runtime. So, people cannot know the runtime logic of the AI Agent either. This constitutes the problem with AI Agent transparency, which we will discuss in a separate section.

The AI Agents are given target tasks in the expectation that the internal logic generated at runtime would identify and resolve intermediary sub-tasks. Therefore, the AI Agent is not constrained in generating logic that might need extra functionality and data, i.e., the AI Agent can face intermediate resource limitations. These situations are much more likely for the general-purpose AI agents, or GPAI agents, operating in the socio-cultural and political spheres.

An AI Agent may be armed with policies that allow it to look up and reach for additional resources. However, such policies require a relatively complex supplemental environment that deserves a separate article.

In this work, we assume that GPAI Agents may and can interact with resources that were not initially identified and provided for the GPAI Agent. The theory of risk estimation for composite systems has reserved a special term, “weight of interaction,” which is a scaling factor that quantifies how strongly the risks of two components influence each other when they interact.

In the risk theory for composite systems, non-anticipated communications and interactions are treated as a dynamic soft dependency. Due to the absence of up-front knowledge of the internal runtime logic of the GPAI Agents, all values of the “weight of interaction” are either unknown or empirical. I suggest that, since the agents are created independently, reasonable values of the “weight of interaction” may be in the range from 0.1 to 0.5.

Thus, GPAI Agents may not be considered totally autonomous because their intercommunication is not prohibited by this technology. Instead, we can accept a feature of autonomy for GPAI Agents only in the sense of activities independent of human oversight.

This line of logic leads me to two more options:

1) Inter-GPAI Agents and resource communications in the GPAI Agents-based composition owned and controlled by humans or legal entities.

2) Inter-GPAI Agents and resource communications outside of the boundaries of the GPAI Agent based composition.

Both options will be discussed in the following sections.

Broken Backward Compatibility

From the time of regular stand-alone generative GPAI, people have used it to refer to and define the AI context. As it was specified for standardised SOA, the AI context is seen as a set of external execution variable conditions that can influence the AI agent’s behaviour during the resolution of a predefined overall goal and can be applied for each particular run or interaction of the AI Agent instance.

The context can include both business and technology aspects. The execution context for stand-alone GPAI and Agentic GPAI differs in wider scope, higher scalability, and new content. However, the aspects of the context for GPAI Agents could still be used for the stand-alone GPAIs. For instance, the context for Agentic GPAI may include:

* Input from the environment or user

* References to the legal rules and regulation statements existing in the current jurisdiction and impacting the business that the goal relates to

* Task-specific constraints defined via policies

* Time or resource limitations defined via policies

* Any ephemeral states defined via policies.
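The context aspects listed above can be pictured as a simple data structure. The following is only an illustrative sketch: the class name `ExecutionContext`, its field names, and the sample values are hypothetical and do not come from any standard or from the EU AI Act.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionContext:
    """Hypothetical container for the context aspects of one agent run."""
    user_input: str                                                   # input from the environment or user
    legal_references: list[str] = field(default_factory=list)        # rules of the current jurisdiction
    task_constraints: dict[str, str] = field(default_factory=dict)   # task-specific constraints (policies)
    limits: dict[str, float] = field(default_factory=dict)           # time or resource limits (policies)
    ephemeral_state: dict[str, str] = field(default_factory=dict)    # short-lived states (policies)

    def missing_aspects(self) -> list[str]:
        """Return the names of the optional aspects left empty for this run."""
        optional = ("legal_references", "task_constraints", "limits", "ephemeral_state")
        return [name for name in optional if not getattr(self, name)]


# Sample values are illustrative only.
ctx = ExecutionContext(
    user_input="Summarise the complaint and propose a reply",
    legal_references=["EU AI Act, Article 14"],
    limits={"max_seconds": 30.0},
)
print(ctx.missing_aspects())
```

A check like `missing_aspects()` hints at why human control over context gets harder in compositions: every additional agent brings its own set of aspects that someone has to fill in and keep consistent.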

The execution context is important for every Agentic AI and it is still manageable for stand-alone GPAI agents. In compositions, human control over several context aspects is lost as the complexity of the composition increases. By complexity, I understand not only the number of involved independent GPAI Agents but also an increase of soft dependency between them.

An additional problem for GPAI Agents relates to a potential, and possibly inevitable, conflict between the overall goal and the applied policies. In this case, policies work like rules in the well-known rule-based engines and solutions of the past. Particularly, the more rules a business wanted to set, the higher the risk of losing integrity across the whole rule set became. The popular saying of that time joked, “If your engine has two or more rules, any one of these rules can be worked around using other rules.”

Therefore, an inadequate design of an individual Agentic GPAI and an even more incompetent design of a composite Agentic AI-based solution can easily lead to situations where policies or their combination prevent the Agentic AIs from resolving the set goal. In a chess game, this is known as an endgame: the runtime execution may change neither the goal nor the policies.

Even if an endgame has been avoided, the loss of direct (human) context management for each Agentic GPAI increases the chances that the expected goal would not be reached or that the outcome would be incomprehensible, confusing, or even incorrect.

Attempts to regulate the internal processing of individual GPAI Agents or their composition via policies raise another risk described above.

Categorisation of the GPAI Agent Composition

I have started with a composition of Agentic GPAI rather than a simpler stand-alone GPAI Agent to avoid extremely popular misconceptions spread over IT.

Within this article, a GPAI Agent Composition is understood as a coordinated assembly of GPAI Agents that interact and operate collectively to achieve a shared objective or goal, or to solve a common task, exploiting their individual capabilities. This extends Google’s statement that “Agent composition — or ‘stitching together’ multiple specialized agents to achieve a complex goal — is a cornerstone of the Agentic AI vision”, which stresses coordinated orchestration across independent agents, while no particular coordination is preset in this article.

A lot of less competent people believe that if they tried “small” and succeeded, they may extend this experience as-is to the “big.” Apparently, many lessons, conclusions, methods, and even tools do not work for “big” properly or, at least, the same way as for “small.”

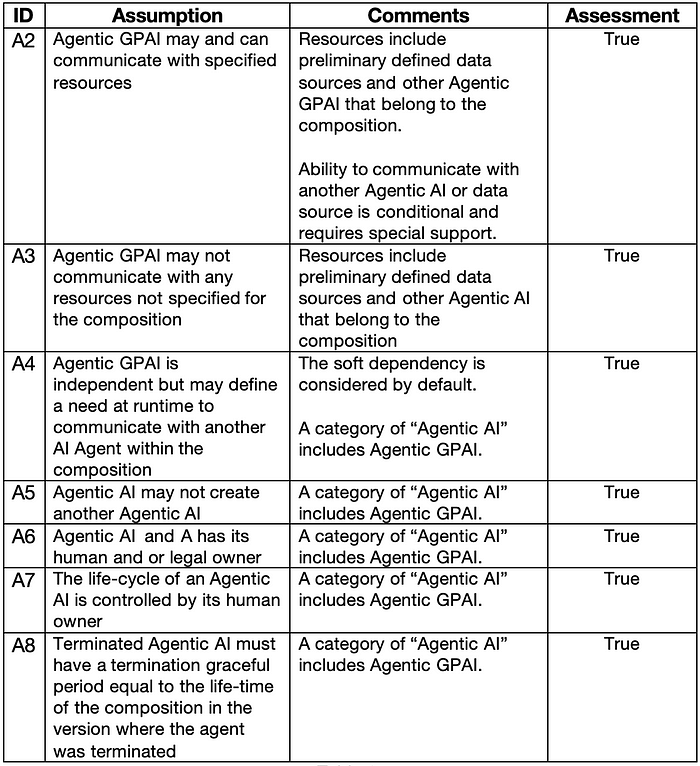

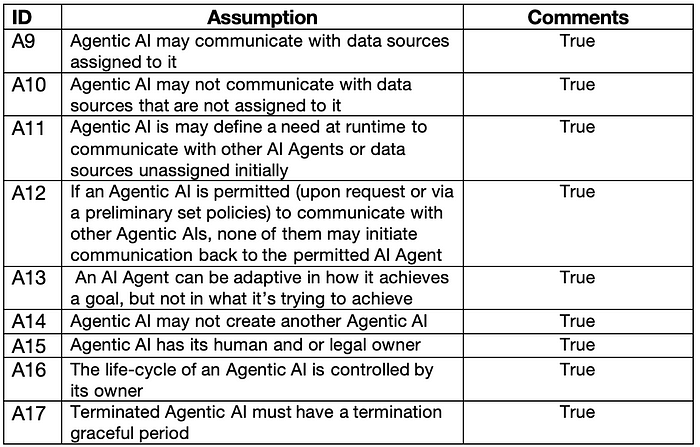

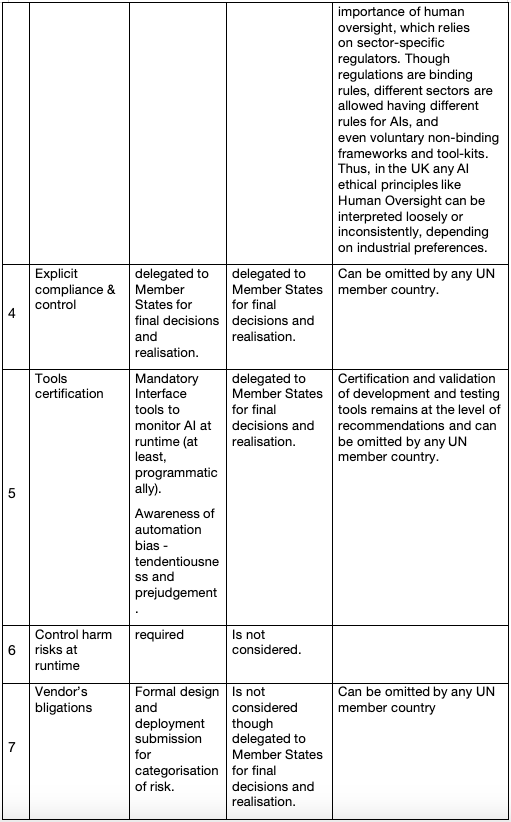

So, I have to make a few more assumptions in addition to A1. They are listed in Table 2.

Table 2.

Assessment of Composite Risk

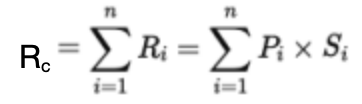

According to Article 3 (Definitions) of the EU AI Act and in line with the assumptions made, the risk of each GPAI Agent $i$ in the composition is described by a severity $S_i$ (impact) and a probability $P_i$ (likelihood), which are scaled by the “weight of interaction” factor. So, the risk $R_i$ can be defined as:

$$R_i = P_i \times S_i,$$

where $P_i$ is estimated empirically in testing and $S_i$ is defined based on the subject that GPAI Agent $i$ addresses (it may be documented and preconfigured).

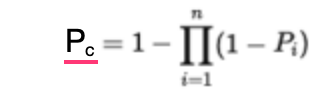

If there are no possible interactions between GPAI Agents in the composition, the composite risk probability $P_c$ is

where $n$ is the number of agents in the composition.

The total risk of the composition $R_c$ is:

If interactions between GPAI Agents in the composition are possible, the calculations are more complex and can even use Bayesian algorithms. I have considered the more difficult case where each GPAI Agent has its own severity and probability and can interact with others, i.e. GPAI Agent $i$ has $S_i$, $P_i$ and $w_{ij}$, the interaction weight between GPAI Agent $i$ and GPAI Agent $j$. Interaction between agents is understood in two senses: 1) the input to agent $j$ can be generated by agent $i$ and vice versa, and 2) the materialisation of the risk of agent $j$ can impact the behaviour of agent $i$ and vice versa.

The total severity of the composition $S_c$ can be expressed as:

where the first sum represents the composite risk with no interactions and the second sum represents the composite risk that accounts for the interaction effects between agents.
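As a sketch only, the quantities described above might be written as follows. Two assumptions are made here that the text does not confirm: first, that without interactions the agents' risks materialise independently, so the composite probability is the chance that at least one of them materialises; second, that the interaction term for a pair of agents is the interaction weight times the average risk of the two agents. The symbol $R_c^{\mathrm{int}}$ is introduced purely for illustration.

$$P_c = 1 - \prod_{i=1}^{n} (1 - P_i)$$

$$R_c = \sum_{i=1}^{n} P_i S_i$$

$$R_c^{\mathrm{int}} = \sum_{i=1}^{n} P_i S_i + \sum_{i<j} w_{ij}\,\frac{P_i S_i + P_j S_j}{2}$$

In the last expression the first sum is the no-interaction part and the second sum collects the pairwise interaction effects; with all $w_{ij} = 0$ it reduces to $R_c$.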

Several simulations of composite risk estimation have been run using arbitrary, randomly selected values for $S_i$, $P_i$ and $w_{ij}$. Mimicking the EU’s Risk Categorisation scheme, only 4 categories of risk values were used: high risk = 4, limited risk = 3, minimal risk = 2, systemic risk = 1. Any risk that exceeded a value of 4.1 was considered prohibited, i.e. the related composition may not be allowed to operate in the market.

Due to the unavoidable systemic risk that soft dependencies can contribute, the “interaction weights” were defined in the range from 0.1 to 0.5. The risk of interaction depends on the average risk of the two interacting agents and the interaction weight.
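A minimal Python sketch of such a calculation is given below. It assumes that the base risk of an agent is probability times severity and that the interaction risk of a pair is the interaction weight times the average risk of the two agents; the function names and the sample values are hypothetical and are not the values used in the simulations.

```python
from itertools import combinations


def base_risk(p: float, s: float) -> float:
    """Risk of one agent: probability times severity."""
    return p * s


def composite_raw_risk(probs, sevs, weights):
    """Return (total_base, total_interaction, raw_total) for a composition.

    weights maps an agent pair (i, j) with i < j to its interaction weight;
    pairs that are absent from the mapping are treated as non-interacting.
    """
    risks = [base_risk(p, s) for p, s in zip(probs, sevs)]
    total_base = sum(risks)
    total_interaction = 0.0
    for i, j in combinations(range(len(risks)), 2):
        w = weights.get((i, j), 0.0)
        # Assumption: interaction risk = weight * average risk of the pair.
        total_interaction += w * (risks[i] + risks[j]) / 2
    return total_base, total_interaction, total_base + total_interaction


# Illustrative values only; weights stay within the 0.1 to 0.5 range.
probs = [0.2, 0.5, 0.1]
sevs = [4, 2, 3]
weights = {(0, 1): 0.1, (1, 2): 0.5}
base, inter, raw = composite_raw_risk(probs, sevs, weights)
print(round(base, 3), round(inter, 3), round(raw, 3))
```

Keeping the base and interaction totals separate makes it easy to see how much of the composite risk comes from soft dependencies alone.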

Upon calculating the Total Base Risk and the Total Interaction Risk, the Total Raw Risk was normalised to the risk range [1–4]. Because of this range, the following intermediary calculations were performed:

* $R_{raw}$ = Raw Total Risk = Total Base Risk + Total Interaction Risk

* Min Base Risk

* Min Interaction Risk

* $R_{min}$ = Min Raw Risk = Min Base Risk + Min Interaction Risk

* Max Base Risk

* Max Interaction Risk (assume all interactions between agents are with maximum risk)

* $R_{max}$ = Max Raw Risk = Max Base Risk + Total Max Interaction Risk

and the result is

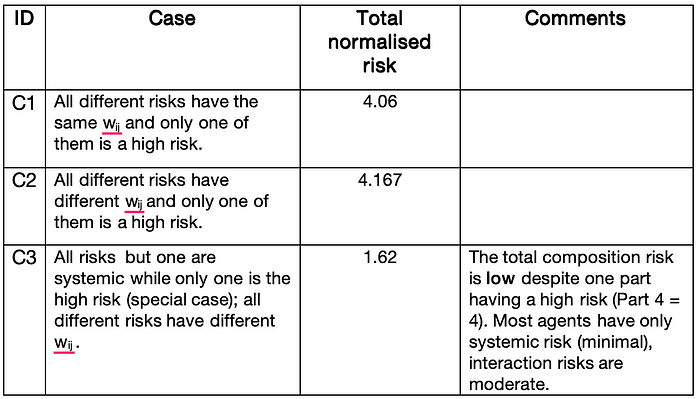
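The normalisation step can be sketched as a plain min-max rescaling into the range from 1 to 4. This form is an assumption based on the quantities listed above, and the function name `normalise_risk` and the sample bounds are hypothetical.

```python
def normalise_risk(r_raw: float, r_min: float, r_max: float,
                   low: float = 1.0, high: float = 4.0) -> float:
    """Min-max rescale a raw composite risk into the range [low, high]."""
    if r_max <= r_min:
        raise ValueError("r_max must be greater than r_min")
    return low + (high - low) * (r_raw - r_min) / (r_max - r_min)


# Illustrative bounds only, not taken from the simulations.
print(normalise_risk(2.5, r_min=0.5, r_max=6.5))
```

With this rescaling the minimum raw risk maps to 1 and the maximum raw risk maps to 4, so the bounds have to be computed for the same set of agents and interaction weights as the raw risk itself.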

A few variants of calculations for the same risks (probability and severity) collection and varying “interaction weights” as well as for the special case of risks are provided in Table 3.

Table 3.

Provided result and the calculated one. The impact of one Agentic GPAI that has a high risk had been “compensated” in the used formulas, while its effect on the person remains unknown. Thus, without investigation of the nature of the activities of this GPAI Agent and their influence on the total final outcome, we cannot categorise the composition accurately.

This result leads me to the conclusion that the EU’s Risk Categorisation scheme is incomplete. Particularly, it requires at least one iteration of reassessment based on the outcome of the mentioned investigation. The related risk may be named “Irresolute.”

Categorisation of a Stand-alone GPAI Agent

Below are several assumptions following A1 that I believe are quite realistic for a stand-alone GPAI Agent:

Table 4.

Any GPAI Agent is capable of internally planning the flow of actions and orchestrating it with a single purpose: to resolve the overall goal or task. The planned actions (steps) are unknown to the owner or an executor of the agent. Such a plan can include desirable interactions with resources beyond the agent’s own. In this case, the GPAI Agent has two choices:

1) Self-terminate its work.

2) Engage the predefined policies that allow additional external communications.

Reviewing the second choice, I have to note that the available policies may allow communications that the agent’s plan does not need while some needed ones are not permitted, which turns the agent back to the first choice. The GPAI Agent can try to adapt the plan and resolve the goal in a different way. However, this capability does not guarantee the resolution either.
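The decision described above can be sketched in a few lines of Python. This is a simplified illustration that assumes communications can be named and compared as strings; the function name `next_step`, the return labels, and the resource names are hypothetical.

```python
def next_step(needed: set[str], permitted: set[str]) -> str:
    """Decide what a stand-alone agent does when its plan needs external communications."""
    blocked = needed - permitted          # needed communications that no policy permits
    if not blocked:
        return "engage_policies"          # every needed communication is permitted
    return "replan_or_terminate"          # at least one needed communication is blocked


# Policies may permit more than the plan needs; that alone changes nothing.
print(next_step({"registry_api"}, {"registry_api", "mail_gateway"}))
# One needed communication is not permitted, so the agent is back at the first choice.
print(next_step({"registry_api", "payments_api"}, {"registry_api"}))
```

The second call shows the situation in which permitted but unneeded communications do not help: the plan is blocked by the single communication that no policy allows.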

If particular communication is demanded and permitted, a special execution environment should be provided. This environment can be described in a separate article. In contrast to operations within the composition, the stand-alone GPAI Agent may not communicate with other GPAI Agents; otherwise, it would constitute a soft dependency and transform the case into the composite risk calculation described in another section already.

As I mentioned earlier, the risk for a GPAI Agent is a function of the probability and severity of the risk materialisation. A risk with non-zero probability, if it materialises, does not necessarily result in a failure to reach the overall goal.

The risk of an individual GPAI Agent can be identified by comparing its legally defined target domain of operations with the description of each category in the Risk Categorisation scheme of the EU AI Act.

In case the GPAI Agent fits in more than one category, the most severe risk is assigned to the agent. In case the GPAI Agent fits in no category, the agent should be ranked as “Irresolute.”
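This assignment rule can be expressed as a short Python sketch. The severity order used below is an assumption made for illustration (the labels follow the commonly cited tiers of the Act, not a quotation from it), and the function name `assign_category` is hypothetical.

```python
# Assumed severity order, most severe first; illustrative labels only.
SEVERITY_ORDER = ["unacceptable", "high", "limited", "minimal"]


def assign_category(matching: set[str]) -> str:
    """Pick the most severe matching category, or "Irresolute" if none match."""
    for category in SEVERITY_ORDER:
        if category in matching:
            return category
    return "Irresolute"


print(assign_category({"limited", "high"}))
print(assign_category(set()))
```

How the set of matching categories is produced, i.e. the comparison of the agent’s target domain with the category descriptions, is deliberately left outside this sketch.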

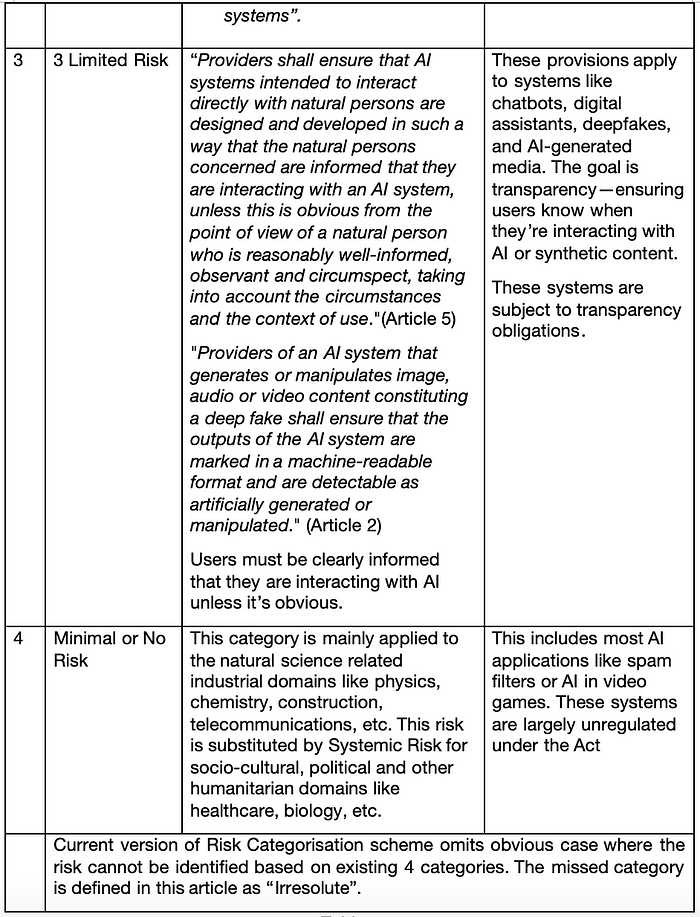

Following is the extract from the EU AI Act that describes all defined categories:

Table 5.

The intrinsic weakness of the EU’s Risk Categorisation scheme is in the textual description of each category. Any description has the potential to miss certain types of activities or industrial applicability and allow a hole. Only meticulous legal wording can cover such a hole, but it may create challenges for non-lawyers to understand categories.

Special Case of Systemic Risk for Stand-Alone GPAI Agent

An assessment of a risk for a stand-alone GPAI Agent is provided at the beginning of the section Assessment of Composite Risk.

Apparently, the EU’s Risk Categorisation scheme specifies an additional cumbersome systemic risk. I call it this way because the risk’s name carries a notion of unavoidable fundamental concern for the AI technology that many prefer to silence. Any technology, especially one working across the boundary of humanity, has its natural negative aspects. If we do not talk about them, we are positioned for making mistakes, and this relates not only to “building skyscrapers” but to human beings, first of all.

The EU’s Risk Categorisation scheme recognises “General-purpose AI models with systemic risks,” which “are defined as models that, by virtue of their capabilities, scale, or impact, pose a risk to public health, safety, personal fundamental rights, or the functioning of the internal market.” “Providers of models with systemic risks are obliged to assess and mitigate risks, report serious incidents, conduct state-of-the-art tests and model evaluations and ensure cybersecurity of their models.” I think that the members of the EU Committee worried that the required control for this risk category may be too harsh for the GPAI creators and cause adoption resistance. So, the EU Committee defined the case as a class of GPAIs free from systemic risks (which is fallacious). Moreover, trying to prevent the resistance, this risk for GPAI was attributed to states where GPAI could be considered powerful enough to pose broad societal or economic threats. The latter includes an internal contradiction with the essential EU’s human-centric concept, which I will discuss later on.

I see the problem with Systemic Risk in its unjustified generalisation, on the one hand, and in the too general nature of GPAI itself, on the other. There were intensive debates around the ethical, practical, and classification issues concerning GPAI, and Systemic Risk helped to uncover some areas of human social life where Systemic Risk for GPAI is in high demand.

Let me probe a bit deeper into this problem.

The constraint of classification is that it must take place before the GPAI is permitted to operate in the market. So, the usage of the GPAI is unknown a priori, and the impact cannot be assessed up-front; it can be assessed only post-factum. However, the current EU thinking pattern about GPAI is based on impact, i.e. on risk-as-impact, while I argue it should be risk-as-possibility. A classification based on impact is unrealistic and useless: it allows the risk to materialise and harm to be done before the GPAI is classified and the related controls are applied, i.e. classifying the GPAI Systemic Risk becomes pointless.

It is known that the EU AI Act anticipates that Systemic Risk can be identified a priori, but this identification is based on not strongly consistent indicators like

* Model size or training scale, which apparently has nothing to do with the ‘capabilities’ of the model algorithms, ‘scale’ in use, and ‘impact’ on the users since size does not dictate any particular use or broad societal or economic threat

* Potential for a broad deployment, which may be questionable initially and result in removing the Systemic Risk from the concrete classification procedure.

Every use of GPAI (and GPAI Agent) in the humanitarian areas possesses a systemic risk because

1) during runtime in the real-world environment, the GPAI possesses a capability to interpret data whose truthfulness has no guaranteed control in the mentioned areas. So, the data cannot be reliably verified online (thanks to bots and political propaganda), nor does the operational data have statistical similarity to the training data, which leaves the LLM prone to mistakes

2) the very fact of having a general purpose for such AI/Agentic AI denotes that its scale starts with a single person and only then may or may not escalate to the society level depending on the semantics of the task for the AI/Agentic AI. So, the scale for GPAI in humanitarian areas should be quite high by definition

3) every GPAI used by a person has a non-zero probability of negative impact. The latter is based on the quality of data described above as well as on the fact that bias, fairness, and harm are subjective ethical matters, i.e., the probability of them in personal acceptance of the AI outcome is, at least, 50%. So, before the GPAI/GPAI Agent may be released to market, there are no doubts about personal impacts, positive or negative.

The discussed qualifiers for applying Systemic Risk to every GPAI in humanitarian areas are based on the general AI principle of human-centricity accepted by the EU. The earlier mentioned intrinsic contradiction in the current EU’s Risk Categorisation scheme is in the omission of the human-centricity of GPAI for the assessment of the Systemic Risk. If this general human-centricity is considered, all aforementioned qualifiers for applying Systemic Risk become trivial.

Thus, the socio-cultural, political, and, in general, humanitarian areas of people’s lives make this a special case where the Systemic Risk preventive controls become mandatory for all GPAI creators and compliance organisations. Otherwise, the EU AI Act would become indistinct from the ruler-centric AI concept that ignores individuals for the sake of rulers or governments.

Overall, I argue that

if a generative AI-based product has the capacity to cause widespread misinformation, bias, or harm, particularly in the socio-cultural-political or humanitarian contexts — it incurs systemic-risk classification regardless of whether a harm has yet occurred.

In essence, this is a “Contextual Precautionary Governance Model.”

Still, the challenge of how to regulate unknown effects on people remains. Many argue that being too restrictive can depreciate innovations, as I mentioned earlier regarding the UK government. While this concern is valid, it is limited in scope. To justify it, one should analyse the nature of the innovations and domains where they are assumed to be applied. In this case, the domain is different aspects of humanitarian activities, which unconditionally means that any innovations that have the potential to harm someone must be assessed and categorised before they may be realised. Categorisation is not the same as prohibition and requires time, effort, and funding.

I try to flip the logic of the EU AI Act’s approach to AI regulation, which asks, “Can we prove this AI is dangerous at scale?”, and replace it with the statement, “We cannot guarantee safety in humanitarian contexts; therefore, we must presume risk,” because this subject is highly sensitive.

I am of the vision that the EU needs a brand of Contextual Risk Frameworks specialised in the humanitarian domains of human society. They would work in addition to the general purpose ones. This brand can be overly focused on prevention of risks to people rather than on preposterous risk mitigation.

Finally, it is well understandable that an implementation of my proposals — model, procedure, governance, and framework — can seriously affect current policies linked to automatic risk categorisation, and this is not easy. Nevertheless, these proposals are basic for realising the EU’s declaration of “ethics by design” promoting a human-centric ethical system for the AI industry.

Part 2.

Human Oversight, Transparency, Record keeping, Safety

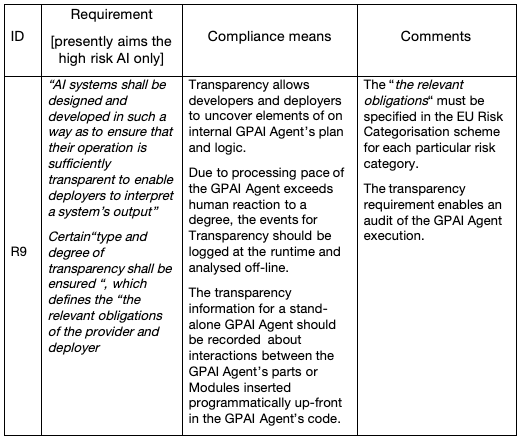

Compliance with the EU AI Act includes not only assessment of the potential harm risks a particular GPAI/Agent can cause to people and societies. The second mandatory part of this conformity includes demonstration of the ability of the GPAI/Agent-based solution to deliver Human Oversight, Transparency, Record Keeping, Accountability and Safety. I split these tasks in two: one for a stand-alone GPAI/Agent and another for the composition of GPAI/Agents.

State of Requirements for Human Oversight

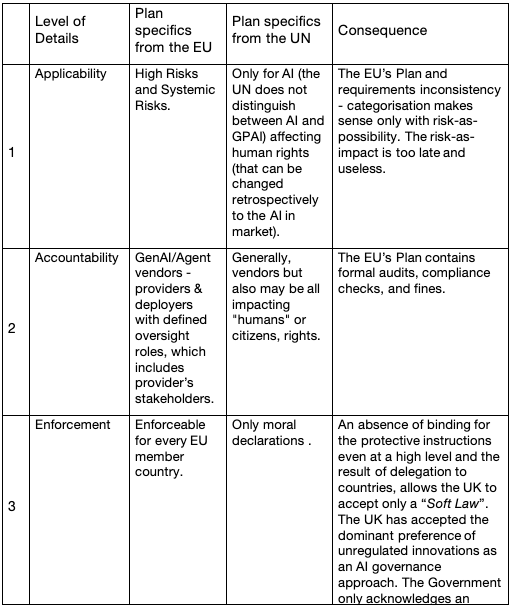

The current state of requirements for Human Oversight is represented by two orthogonal approaches — from the EU and from the UN/UK.

While ethical AI principles and frameworks from both organisations mention Human Oversight, the major difference between them is as follows:

- The EU has declared the ethical AI principle of Human Oversight and, in the EU AI Act, specified actionable requirements for the control over this principle’s implementation that are legally binding and enforceable. The EU AI Act mandates actionable and enforceable requirements for high-risk AI. It should be noted that the current version of the EU’s Risk Categorisation scheme has a gap in the part of applicability and specifies requirements for the high risks only. This is caused by the misconception of the harm risk linked by the EU to the impact on the consumer and omitting the major audience of GPAI, i.e. individual people rather than groups or societies. The details of the requirements are discussed in the following sections.

- The UN/UNESCO, in contrast, only declare the high-level ethical AI principle of Human Oversight. They focus on the notorious Human Rights rather than on the protection of people because the former are just non-binding guidelines.

The following Table 6 summarises the differences in the “master plans” for Human Oversight.

Table 6.

The absence of binding protective instructions even at a high level, together with the delegation to countries, allows the UK to accept only a “Soft Law”. The UK has accepted the dominant preference of unregulated innovations as an AI governance approach. The Government only acknowledges the importance of human oversight, which relies on sector-specific regulators. Though regulations are binding rules, different sectors are allowed to have different rules for AIs, and even voluntary non-binding frameworks and toolkits. Thus, in the UK any AI ethical principles like Human Oversight can be interpreted loosely or inconsistently, depending on industrial preferences.

Conformity for a stand-alone GPAI/Agent

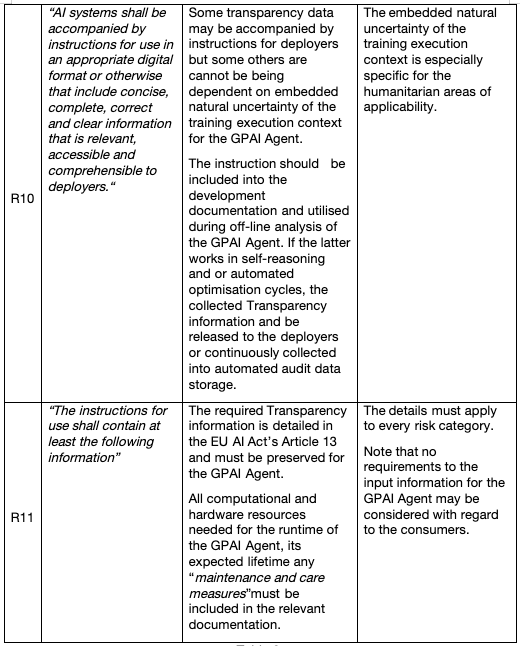

Hereinafter, I will mark the incomplete (applicability to high risk only) or inconsistent (categorisation post-factum risk-as-impact) statements articulated in the EU AI Act with a comment within square brackets like “[…]”.

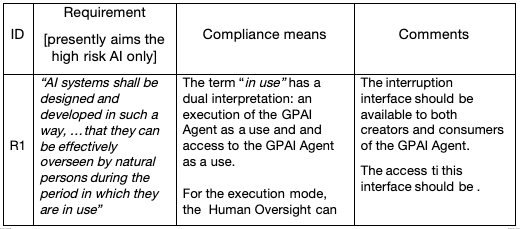

1. Human Oversight

The Accountability requirement to a GenAI Agent is specified in Table… The EU wants to institutionalise Accountability. This should exclude any attempts to assign accountability to the GP Agent or to the consumer (user). The principle of serviceability, i.e. where the consumer is always right and unlimited in using the GPAI Agent in any way prevails.

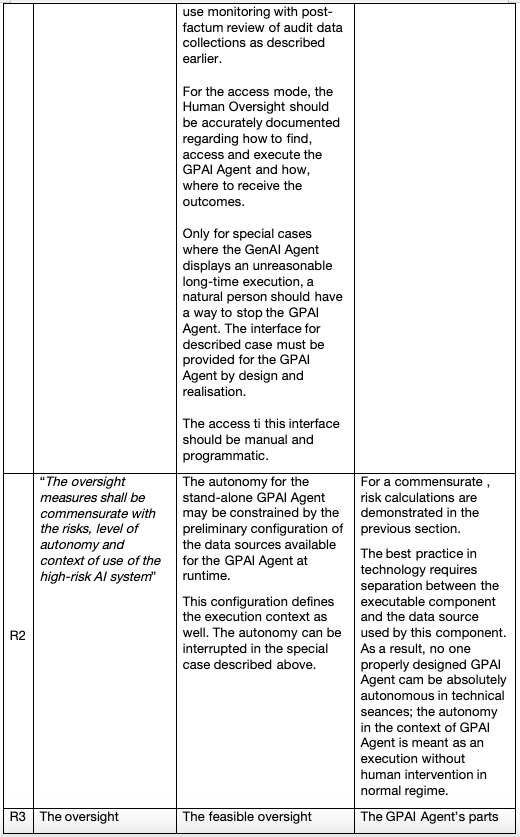

The pace at which the performance of GPAI execution is growing raises serious doubts that the concept of Human Oversight can be reasonably applied to the execution of a GPAI/Agent for the purpose of inspection and real-time control. As current practice demonstrates, human reaction to information is already much slower than the speed of GPAI processing, so real-time human oversight of the internal work of a GPAI Agent is not feasible.

The GPAI/Agent code should be developed in a way that enables debugging during development and deployment in any case, because debugging is a common regular phase of development and does not require any special regulations. So, the only option a natural person can have for the Human Oversight at the GPAI/Agent runtime is automated monitoring and gathering of audit data. The audit events can be deliberately inserted in the GPAI Agent code up-front or, in the future, automatically injected based on the special policies and execution context state. The human analysis of the audit data can be performed only afterwards, off-line.

The EU AI Act’s Article 14 on Human Oversight requirements explains, “The goal of human oversight is to prevent or minimize risks to health, safety, or fundamental rights that may arise from using” AI-based systems.

Table 7.

The oversight must be “proportionate to the risk”. That is, during the categorisation procedure, the represented capabilities, oversight measures, and methods may be seen as adequate, incomplete, or inconsistent, i.e. more oversight controls may be required.

The Human Oversight requirements try to balance between the feasibility of the oversight and the risk of materialisation. Currently, the EU’s law still requires systems, including the GPAI Agent, to provide conceptual “hooks” for human oversight. This is explained as a need for accountability, auditability, and legal structure. While accountability and auditability are covered, only legal structure needs addressing. The situation may be envisioned like a modern ocean liner requiring sails just in case it runs out of fuel. The described requirements realistically identify the cases where human oversight is helpful or useless. A person might have no physical capability to act lawfully if the law is inadequate to the technology in use.

The Human Oversight requirements are overarching for delivering a human-centric ethical GPAI Agent. They define the scope and directions for the Transparency, Record Keeping, and Safety requirements.

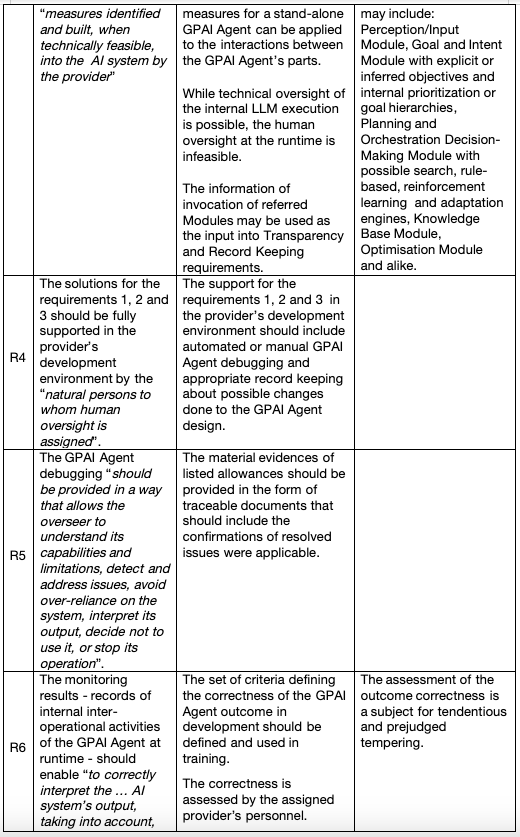

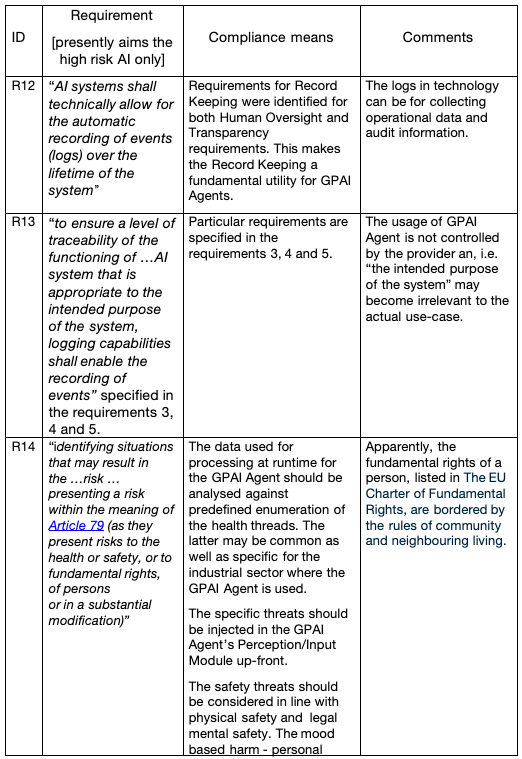

2. Transparency

An intent of Transparency requirements, in addition to enabling the Human Oversight requirements, is to ensure that the deployers and accountable stakeholders can see, interpret, and explain the GPAI Agent outcome. Also, the Transparency information can help the creators to comprehend the internal work of the GPAI Agent, though many famous analysts and researchers lean to the conclusion that real understanding of the internal GPAI logic is unreachable and does not make practical sense but incurs a high price tag.

An interpretation of a GPAI outcome made by people can be tendentious and error-prone. The left-wing’s fraudulent initiative that a GPAI/GPAI agent can be taught to hide the truth and promote socialistic ideas under the cover of “personal safety” works against people and society.

The Transparency requirements are specified in the EU AI Act’s Article 13.

Table 8.

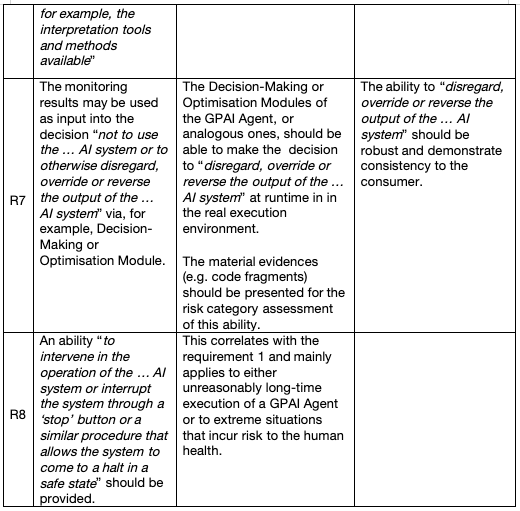

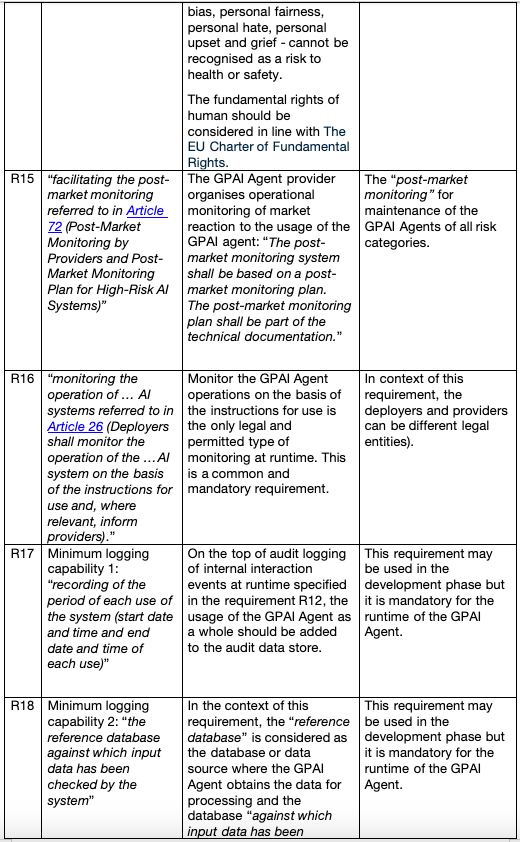

3. Record Keeping

This class of requirements, as well as the ones specified in the recording for the Human Oversight and Transparency requirements, demands a coupling of a GPAI Agent with its own or a dedicated audit data store. This is similar to the solution used for the Microservices and their personal databases. The GPAI Agent’s audit data store may be a hierarchical database where each “node” represents an audit record or document. The concept relationships in this database are represented by the time of the recorded event. The related examples include IBM’s IMS, Windows Registry, and file systems like NTFS.
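The record-as-a-node idea can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration only: the names `AuditNode` and `AuditStore`, the year/month/day bucketing, and the in-memory storage are hypothetical, and a real audit store would need persistence and tamper protection.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditNode:
    """A node of the hierarchy: a time bucket or a leaf audit record."""
    key: str
    record: dict | None = None
    children: dict[str, AuditNode] = field(default_factory=dict)


class AuditStore:
    """Hypothetical per-agent store: agent -> year -> month -> day -> records."""

    def __init__(self, agent_id: str) -> None:
        self.root = AuditNode(key=agent_id)

    @staticmethod
    def _path(year: int, month: int, day: int) -> tuple[str, str, str]:
        return (f"{year:04d}", f"{month:02d}", f"{day:02d}")

    def append(self, event: str, payload: dict, at: datetime | None = None) -> AuditNode:
        """Store one audit record under the bucket of its event time."""
        at = at or datetime.now(timezone.utc)
        node = self.root
        for part in self._path(at.year, at.month, at.day):
            node = node.children.setdefault(part, AuditNode(key=part))
        # The running index keeps keys unique for records with equal timestamps.
        leaf_key = f"{at.isoformat()}#{len(node.children)}"
        leaf = AuditNode(key=leaf_key, record={**payload, "event": event, "at": at.isoformat()})
        node.children[leaf_key] = leaf
        return leaf

    def records_for_day(self, year: int, month: int, day: int) -> list[dict]:
        """Return the records of one day in insertion order, for off-line analysis."""
        node = self.root
        for part in self._path(year, month, day):
            child = node.children.get(part)
            if child is None:
                return []
            node = child
        return [c.record for c in node.children.values() if c.record is not None]
```

In this sketch the relationship between nodes is carried only by the time of the recorded event, which mirrors the description above; any other indexing (by task, by consumer) would be an extension.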

Table 9.

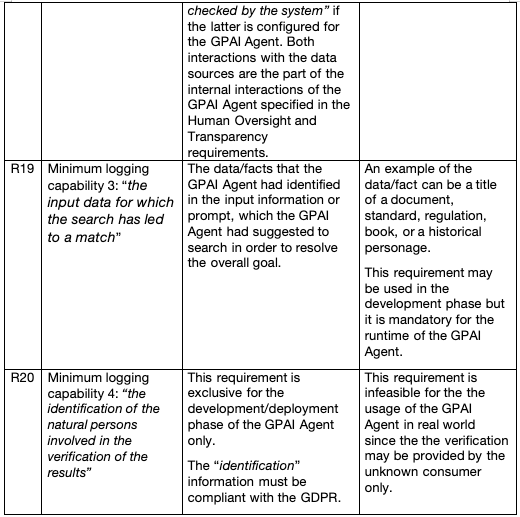

4. Safety

The EU AI Act in Article 9 requires a Risk Management System only for a high-risk GPAI. Since the risk that a GPAI can cause depends not only on the industrial sector where the GPAI can be applied but also on the data in its execution context, categorising an arbitrary GPAI and GPAI Agent based on impact, i.e. post-factum, is infeasible.

Consequently, “identifying potential harms to health, safety, and fundamental rights” should be performed before a GPAI Agent is used. However, the EU AI Act does not define “safety” but instead defines (in Article 3) and requires “a component of a product or of an AI system which fulfils a safety function… the failure or malfunctioning of which endangers the health and safety of persons or property.”

The human-centric ethical AI principles point to the following aspects of human safety:

1) physical safety with regard to physical systems that use the AI or the GPAI Agent

2) mental safety with regard to direct advisory for physical self-harm or physical harm to others

3) safety from direct advisory for a physical harm to the environment.

At the same time, the GPAI Agent should avoid considering artificial extension of “harm” into the emotional sphere of the consumer. Particularly, such concepts as bias, fairness, hate, offence, humiliation, shaming, belittling, insults, exclusion or social rejection, confusion, anxiety, loss of trust in one’s own judgement, depression, reduced self-esteem, embarrassment, public comments targeting someone’s identity or appearance or beliefs, harassment, distress, suicidal ideation, discrimination, degrading stereotyping, helplessness, indignity, and frustration are naturally subjective, i.e. cannot be used as the criteria for safety unless explicitly qualified as such by local laws.

Even this incomplete list of “emotional harm” signals that, if applied in GPAI or GPAI Agents, it prohibits normal human opinions, criticism, teaching, assessment of personal behaviour or competence or misconduct, and may be unacceptable in several socio-cultural assemblies. The only decision-maker over the content ethics is the consumer with personal ethical norms and circumstances.

An ethical judgement over the GPAI Agent may be made only at the point of human interpretation of the outcome — not be hardcoded or pre-programmed into the GPAI Agent’s logic, training data, or governance structures without the legal obligation.

This challenges much of the current UN’s promoted “AI safety and alignment thinking” like

- ethics can be formalised (formal moral authority)

- a single ethical baseline (often Western liberal democratic) can be embedded in all AI

- users should be protected from exposure to ethically “risky” content, even if they disagree.

The goal of this “safety” is realising the WEF left-wing’s fraudulent directives and pushing leftist propaganda to obfuscate the life truth and promote socialistic ideas under the cover of “personal safety,” i.e. to enslave the humanitarian society.

The contra-arguments for “safety” in line with ethical human centricity for people in society can be voiced as:

Due to the tremendous variety of ethical systems in the world — in different countries, regions, cultures, and social groups — ethics not only cannot but also may not be formalised without erasing socio-cultural diversity.

The same variety of ethical systems might be embedded in any AI rather than a single ethical baseline of liberal “democracy” that leads to the neo-Marxist ideology.

Only users/consumers decide what is ethically “risky” to them, not any external formal authority or leadership, and may deny the outcome as they wish.

The safety of a GPAI Agent requires that the outcome remain open for interpretations by the consumer and free from embedded moral authority guidelines. Any attempt to predefine ethics in the GPAI Agent outcome, like censor facts, restrict access to meaning, or enforce “protective” moral filters, transforms the GPAI Agent into a coercive instrument of control. Then, everything is dependent on whose hands this control ends up in. Ethical GPAI Agent is not about safe answers — it’s about preserving the consumer’s right to determine what matters.

Conformity for a Composition of GPAI/Agents

A conformity of the GPAI Agent composition with the EU AI Act is defined on top of the related conformity of each included GPAI Agent. As I described in the section “Categorisation of the GPAI Agent-Based Composition,” there are interrelationships and interactions between involved GPAI Agents within the composition that contribute to conformity.

The GPAI Agents may interact with each other and resources configured for the composition. So, the GPAI Agents in the composition may have soft dependencies.

An involvement of external resources — other GPAI Agents and data sources — extends the conformity requirements on those resources. Unfortunately, the external GPAI Agents may be under the control of different providers and appear non-compliant with the EU AI Act. This results in the whole composition becoming non-compliant.

The recording of the interaction information between a pair of GPAI Agents over all interactions forms the audit logging for the purpose of Human Oversight, transparency, and composition record keeping.

Due to a need for external resources that can be identified during the GPAI Agent’s runtime, the GPAI Agent composition creators should consider the design and realisation of one of two options: an identified need in the external resource

1) immediately stops the work of the composition as a fatal condition

2) involves an “access control mechanism”, which can either permit the interaction with the requested resource or deny it and turn the state of the work into option 1).

These options can be configured for a GPAI Agent via runtime policies.
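A minimal sketch of how the two options might be expressed as a runtime policy is given below. The names `ExternalResourcePolicy`, `CompositionHalted`, and `resolve_external_need`, as well as the callable standing in for the control mechanism, are hypothetical and are not taken from the EU AI Act or from any existing framework.

```python
from enum import Enum
from typing import Callable, Optional


class ExternalResourcePolicy(Enum):
    """Hypothetical runtime policy for a need in an external resource."""
    STOP = "stop"        # option 1: treat the need as a fatal condition
    ASK_ACM = "ask_acm"  # option 2: delegate the decision to a control mechanism


class CompositionHalted(RuntimeError):
    """Raised when the work of the composition must stop."""


def resolve_external_need(
    resource_id: str,
    policy: ExternalResourcePolicy,
    acm_permits: Optional[Callable[[str], bool]] = None,
) -> str:
    """Return the resource id if access is permitted, otherwise halt.

    `acm_permits` stands in for the control mechanism; a denial, or a missing
    mechanism, falls back to option 1.
    """
    if (
        policy is ExternalResourcePolicy.ASK_ACM
        and acm_permits is not None
        and acm_permits(resource_id)
    ):
        return resource_id
    raise CompositionHalted(f"external resource not permitted: {resource_id}")
```

The design choice in this sketch is that every path other than an explicit permission ends in the fatal condition, so a misconfigured policy fails closed rather than open.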

The Authorisation Control Mechanism (ACM) is not trivial and requires a separate article. In the case of invocation of the ACM, the conformity with the EU AI Act for the external resources becomes the duty of the ACM.

That is, the security, consistency, and safety of the work of the GPAI Agent composition should be compliant with the well-known security principle of Least Privilege. This principle may be applied within the composition, but for external communications it is mandatory.

The interactions within the composition should be enabled by interfaces between the GPAI Agents that are designed and implemented in advance. The complexity of these interfaces may vary, but no assumption may be made up-front for the cases of soft dependencies. If two or more functional links are identified in the design, this eliminates the agent autonomy. So, a GPAI Agent composition may need a composition-scoped “universal” interface for the composition members.

The GPAI Agent that initiates the interaction acts as a consumer. Therefore, it should be settled in the position of decision-maker over the outcome of the invoked GPAI Agent. In other words, the interaction initiator makes a runtime decision on how to use the received outcome and what to do next.

The act of interaction should be monitored, and interface-specific information should be persisted in the audit data store of the composition. In addition to the nomenclature of data for the audit record specified for the stand-alone GPAI Agent, the audit logging should provide, at a minimum:

- a GPAI Agent’s identity of the initiator and responder

- the timestamp when the request was made and the timestamp when the response was received, i.e. any time discrepancy between the hardware where the interacting GPAI Agents reside is excluded

- the information sent and received in the interaction where feasible

- an indication of whether it is an internal or external interaction.

All interaction-related information listed above applies to the communication with the ACM, if involved.
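The minimum audit record listed above could be sketched as follows. The field names, the `audited_call` wrapper, and the use of a plain list as the audit data store are illustrative assumptions. Taking both timestamps on the initiator’s clock is one possible reading of how the time discrepancy between hosts can be excluded.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List, Optional


@dataclass(frozen=True)
class InteractionRecord:
    """Hypothetical minimum audit record for one agent-to-agent interaction."""
    initiator_id: str
    responder_id: str
    requested_at: datetime   # initiator's clock
    responded_at: datetime   # initiator's clock as well
    sent: Optional[str]      # payloads are stored only where feasible
    received: Optional[str]
    external: bool           # True for interactions outside the composition


def audited_call(
    initiator_id: str,
    responder_id: str,
    request: str,
    responder: Callable[[str], str],
    external: bool,
    log: List[InteractionRecord],
) -> str:
    """Invoke the responder and persist an audit record of the interaction."""
    requested_at = datetime.now(timezone.utc)
    response = responder(request)
    responded_at = datetime.now(timezone.utc)
    log.append(
        InteractionRecord(
            initiator_id, responder_id, requested_at, responded_at,
            request, response, external,
        )
    )
    return response
```

The initiator receives the response unchanged, which keeps it in the position of decision-maker over the outcome, while the record is written as a side effect.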

A cooperative work of the GPAI Agent composition can add risks to human safety that are not present in any of the involved individual GPAI Agents. This risk includes a few factors, for instance:

1. Fast continuous impact of the GPAI Agents invoked in a chain that reduces efficiency of mitigation means that could be effective for a stand-alone GPAI Agent.

2. Multiple interactions within the composition statistically increase the chance of failure and related misbehaviour of the composition.

3. Potential extension of the composition for interaction with external resources creates an additional significant dependency on the ACM infrastructure, uncontrolled foreign GPAI Agents and data sources, as well as on unsure compliance of external resources with the EU AI Act.

All listed factors increase the risk of physical and mental harm that a composition of the GPAI Agents can cause to individuals and groups of people. So, a simple grouping of stand-alone GPAI Agents with permissions for a soft dependency cannot be compliant with the EU AI Act regulations. The purposeful design, coordination with ACM, risk controls, robustness and resilience means, testing, monitoring and alarming solutions are necessary for using a composition of GPAI Agents in compliance with the EU AI Act.
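The second factor, the statistical growth of the chance of failure, can be illustrated with a simple worked example. It assumes, purely for illustration, that every interaction fails independently with the same probability; real compositions are unlikely to satisfy this exactly, so the numbers show a trend only. The function name is hypothetical.

```python
def composition_failure_probability(p_single: float, interactions: int) -> float:
    """Probability of at least one failure among independent interactions.

    Assumes each interaction fails independently with probability p_single,
    so the result is 1 - (1 - p_single) ** interactions.
    """
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be within [0, 1]")
    if interactions < 0:
        raise ValueError("interactions must be non-negative")
    return 1.0 - (1.0 - p_single) ** interactions
```

Under these assumptions, a 1% chance of failure per interaction grows to roughly 63% over 100 interactions, which is why a composition cannot inherit the risk profile of its individual members.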

Enforcement Measures

The EU AI Act includes a robust enforcement system of measures with fines for different violations of the Act’s regulations. For example:

- Penalties, Article 99:

“the AI practices referred to in Article 5 (Prohibited AI Practices) shall be subject to administrative fines.”

- Administrative Fines on Union Institutions, Bodies, Offices and Agencies, Article 100

- Fines for Providers of General-Purpose AI Models, Article 101

- Procedure for Dealing with AI Systems Classified by the Provider, Article 80

“1. Where a market surveillance authority has sufficient reason to consider that an AI system classified by the provider as non-high-risk pursuant to Article 6(3) is indeed high-risk, the market surveillance authority shall carry out an evaluation of the AI system concerned.

2. Where, in the course of that evaluation, the market surveillance authority finds that the AI system concerned is high-risk, it shall without undue delay require the relevant provider to take all necessary actions to bring the AI system into compliance with the requirements and obligations laid down in this Regulation, as well as take appropriate corrective action within a period the market surveillance authority may prescribe” [presently aims at high-risk AI only]. Apparently, these statements should be extended to cover all categories of risks.

“3. Where the market surveillance authority considers that the use of the AI system concerned is not restricted to its national territory, it shall inform the Commission and the other Member States without undue delay of the results of the evaluation and of the actions which it has required the provider to take”. It is perceived that GPAI Agents operating in humanitarian spheres will be used across national boundaries (at least due to the EU’s “freedom of movement”), which leads to a necessity for the market surveillance authorities in all Member States to apply the risk-as-possibility categorisation model to all GPAI Agents regardless of the results of the assessment conducted by the provider.

Article 99 specifies different fines for different types of violations of the EU AI Act regulations. Overall, the fines can reach “up to 35 000 000 EUR or, if the offender is an undertaking, up to 7% of its total worldwide annual turnover for the preceding financial year, whichever is higher”. “These penalties highlight the EU’s commitment to strict compliance and the protection of individuals’ rights”.
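The “whichever is higher” rule in the quoted upper bound can be shown with a short arithmetic sketch. The function name and parameters are hypothetical, and the sketch covers only the quoted ceiling, not how an authority would set an actual fine.

```python
def max_fine_eur(is_undertaking: bool, worldwide_annual_turnover_eur: float = 0.0) -> float:
    """Upper bound of the fine quoted above, in EUR.

    For an undertaking the ceiling is the higher of 35 000 000 EUR and 7% of
    total worldwide annual turnover for the preceding financial year.
    """
    fixed_cap = 35_000_000.0
    if not is_undertaking:
        return fixed_cap
    turnover_cap = worldwide_annual_turnover_eur * 7 / 100
    return max(fixed_cap, turnover_cap)
```

For example, with an assumed turnover of 1 000 000 000 EUR the turnover-based ceiling (70 000 000 EUR) applies, while with 100 000 000 EUR the fixed ceiling of 35 000 000 EUR remains the higher one.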

Conclusions

The article addresses two major aspects of the EU AI Act that the GPAI Agent based products should adhere to in order to operate within the European Union. These aspects include calculation of risks (in the EU AI Act definition) and conformity with the EU’s requirements for Human Oversight, Transparency, Record Keeping and Safety.

All enumerated requirements are unapparent because the EU AI Act refers to GPAI Agents indirectly, and the industry focuses on the implementation problems, omitting even a possibility of risks for humans. The arguments that any regulations can deprive innovations are either fraudulent or incompetent because any innovation that does not fit with a human-centric approach to personal life and society must be eliminated.

The major conclusions made in the article are listed below:

1. The current version of the EU AI Act contains a few specific statements that preserve risk-as-impact, i.e. considers risk assessment and categorisation after the harm is done to the people.

The article proposes the proactive risk-as-possibility approach.

2. The current version of the EU AI Act concentrates on the high risks, which, in combination with risk-as-impact, makes categorisation ineffective and helpless.

The article proposes applying the categorisation procedure as mandatory to all GPAI Agents and their compositions before their release into the market or usage.

3. The current version of the EU AI Act recognises the risks to the organisations of people — groups, societies, and business companies.

The article proposes applying the categorisation procedure to individuals as well as preserving the human-centric AI ethical principles.

4. The EU’s Risk Categorisation scheme comprises 4 risks, including “Unacceptable” risk.

The article proposes the fifth “Irresolute” risk category for cases where not all of the EU’s compliance requirements are met. This risk must be assessed by the related national surveillance authority and resolved.

5. The article provides a detailed discussion about the use of the GPAI Agents or related compositions in the humanitarian spheres. This leads to two basic utterances correlating with the EU declaration of “ethics by design”:

1) Every GPAI Agent or related composition should be categorised as high-risk by default.

2) Every GPAI Agent or related composition possesses a Systemic Risk by default because an inevitable exposure to people from different cultures has a potential of broad deployment and ethical mismatches with the outcome ethics.

6. Current understanding of the work of a GPAI Agent is set around the autonomy of the agent.

The article outlines that the isolated work of the GPAI Agents is inefficient in several real-world situations, and a collective effort of the GPAI Agents may be required. So, the article considers a soft dependency of each GPAI Agent and addresses a composition of the GPAI Agents as an entity for compliance with the EU AI Act.

7. Configuration and bordering for every GPAI Agent is a mandatory prerequisite for considering compliance with the EU AI Act.

8. The article argues that GPAI Agent categorisation under the EU AI Act is essential, but the result depends on the typal context. That is, the accountability of the provider for a GPAI Agent product or related composition exists and is enforceable before the public use takes place.

9. The regulation rules are unlikely to have retroactive effects unless new risks of a certain type are discovered after the GPAI Agent has been released to the public. The EU AI Act encourages responsibility without discouraging creativity and creates room for correction rather than only punishment.

10. A discrepancy between the overall goal or task for a GPAI Agent or related composition and configured policies, especially over the time of maintenance, requires periodical special thorough testing.

11. The risks (in the EU AI Act’s definition) associated with a stand-alone GPAI Agent and a composition of the GPAI Agents differ since the latter constitutes additional risks to people.

12. Qualitative assessment of the total risk potentially caused by a composition of the GPAI Agents should include aspects of interdependence and interactions. The calculation formulas are provided.

13. The statistical nature of the risk assessment irons out the effect of a single high risk among multiple minimal risks for a GPAI Agent composition. However, the impact of this high risk on a person remains unknown. Thus, without additional investigation, the composition’s risk should be categorised as “Irresolute”.

14. For a stand-alone GPAI Agent, calculation formulas are provided.

15. A presence of an “Unacceptable Risk” among the risk categories in the EU AI Act simply evidences that the risk assessment should be made beforehand, i.e. in a risk-as-possibility manner. Otherwise, serious harm to people may have already been done.

16. Each GPAI Agent requires its own dedicated data store or database for audit and debugging purposes. The recommended type is the hierarchical record-as-a-node data store.

17. A real-time monitoring (with corresponding auditable recording) of the internal processing of a GPAI Agent at runtime is mandatory.

18. A Human Oversight in real time over the internal work of the GPAI Agent is not feasible. Only an offline or post-factum analysis of the logged monitoring records is available for the Human Oversight as a compliance with the EU AI Act.

19. A Human Oversight for the composition of GPAI Agents adds more control and needed information on top of the Human Oversight requirements for a stand-alone GPAI Agent.

20. A Human Oversight for the composition requires monitoring of interactions between each pair of GPAI Agents, which can constitute additional risks.

21. The Human Oversight, Transparency, Record Keeping, and Safety assume that interactions with GPAI Agents and resources external to the composition are to be controlled by an authorisation control mechanism. The latter should be responsible for ensuring that the external GPAI Agents and resources are compliant with the EU AI Act as well. Otherwise, the entire composition becomes non-compliant.

22. Compliance with the EU AI Act for a GPAI Agent obliges the providers to accompany the GPAI Agent with a mechanism to stop the agent’s work. The criteria for applying such a mechanism depend on the provider. This mandatory feature currently “contradicts” the misunderstood concept of autonomy, but it is required in the risk-driven categorisation.

23. A runtime monitoring of communication between the modules of a GPAI Agent is mandatory; it contributes a great deal to Human Oversight, Transparency and Record Keeping.

24. The Transparency requirement predominantly relates to the GPAI Agent providers — developers, deployers, and assigned stakeholders.

25. The record-keeping requirement supports both the human oversight and transparency requirements. This article argues that the record-keeping requirement should be applied to every GPAI Agent regardless of the risk it is categorised with.

26. The article defines the minimal logging information for individual GPAI agents and for the composition of GPAI agents. The defined logging information should be added to the individual or composition audit data stores, respectively.

27. The article defines a minimal set of risks caused by the very composition of GPAI Agents.

The aspects of Safety in the compliance for both the stand-alone GPAI Agent and related composition are still in flux because “safety” is not defined at all. Setting requirements for a “safety component” in this case makes little sense. Moreover, the personal subjective nature of “emotional harm” is either misunderstood or overstressed. Only the consumer of the agent has the power and natural rights to judge the ethics presented in the outcome and make a decision about what is “good” and what is personal “harm”. No providers, authorities, or governments may dictate to the person any ethical norms.

Overall, the GPAI Agent based products or systems must preserve human interpretive freedom over the outcome content. The risks of stand-alone GPAI Agents and related compositions constitute the subjects of personal ethical interpretation. Attempts to enforce ethics via GPAI Agent through training data, moral constraints, or top-down transparency requirements risk building authoritarian digital regimes that erase personhood and cultural diversity. The proactive risk-as-possibility compliance with the Human Oversight, Transparency, Record Keeping, and Safety converts each GPAI Agent and a composition of GPAI Agents into human-centric ethical instruments.